Extract Text from Objects

Many organizations have schematic or industrial diagrams that contain standard objects representing components or industrial parts. Often these components contain text that gives end users the information they need without going back to the person who drafted the diagram. By combining AI Builder in the Power Platform with Form Recognizer in Azure Cognitive Services, a Power Automate workflow can automatically use a trained model to extract text from an image so that the text can be indexed and searched in SharePoint.

Standard symbols

Within any industry, much like in a spoken language, standard objects or symbols are adopted to convey meaning and to provide a common set of images from which solutions are built. Typical examples are schematic diagrams and industrial components.

These objects can contain text that further defines the object's specifications. When a user wants to search for a specific object and retrieve the text inside it, AI technologies can be configured to do that work.

Schematic diagrams are a good use case because they contain many different types of objects that hold useful information.

Industrial diagrams also contain standard objects that, once indexed, can be easily searched.

Power Automate

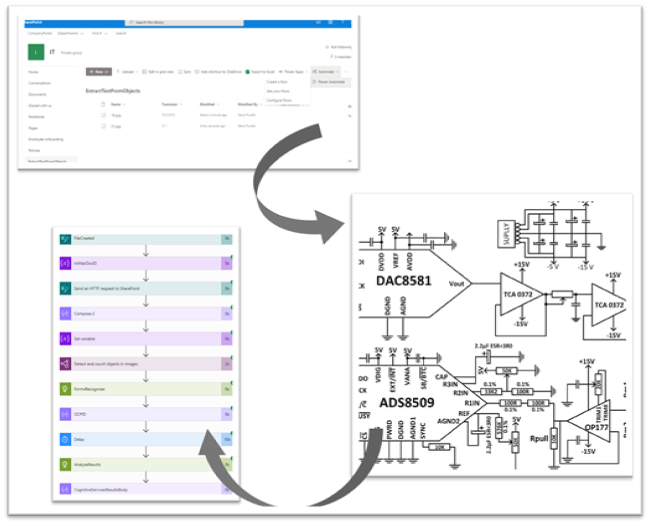

The core of the solution lives in Power Automate, where activities can be configured to process the image and extract the text from the objects.

It all starts in a SharePoint document library that has been configured with the metadata you want to extract.

To accomplish this, two main steps need to be configured in Power Automate:

- Object Detection – use AI Builder in Power Apps to train a model that recognizes specific objects and returns their pixel coordinates for later processing.

- Form Recognizer in Cognitive Services – performs a full OCR of the image and returns all of the text along with its pixel coordinates.

Object Detection

The first step uses AI Builder to train a model that recognizes the specific objects we want.

To begin building your model:

- Navigate to the AI Builder section of Power Apps and click “Build”.

- Select the Object Detection template and give the model a name.

- For the domain of the model, select “Common objects” and click Next.

Tagging

AI Builder allows you to create tags that you then map to an object during the model training phase.

Create as many tags as necessary to assign to the objects in your images.

AI Builder requires a minimum of 15 images to train a model. The more images you provide, the better the model's accuracy and the higher its confidence scores.

For each uploaded image, select the area that contains the object you want to train on, then choose the correct tag from the context menu.

Repeat this for every object in the image that you want to identify. The selection doesn't have to be pixel perfect; however, accurate object boundaries lead to better text extraction later.

Once you have tagged all 15 images, you can publish the model, which makes it available in Power Automate through the “Detect and count objects in images” activity.

The result of this activity is a .json file that will be used later for further processing.
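The article does not show this JSON, so the sketch below assumes a simplified shape: a `results` array whose entries carry a `tagName`, a `confidence` score and a `boundingBox` with `left`, `top`, `width` and `height` normalized to the 0 to 1 range of the image. Under that assumption, it drops low-confidence detections and scales each box to pixel coordinates so it can later be compared with the OCR output. The field names, the normalization and the 0.5 threshold are all assumptions to verify against the real activity output.

```python
import json
from dataclasses import dataclass


@dataclass
class DetectedObject:
    tag: str
    confidence: float
    left: float    # x of the left edge, in pixels
    top: float     # y of the top edge, in pixels
    right: float
    bottom: float


def parse_detections(raw_json: str, image_width: int, image_height: int,
                     min_confidence: float = 0.5) -> list[DetectedObject]:
    """Convert an (assumed) detection payload into pixel rectangles.

    ASSUMPTION: entries look like
      {"tagName": ..., "confidence": ...,
       "boundingBox": {"left": ..., "top": ..., "width": ..., "height": ...}}
    with box values normalized to the 0..1 range of the image size.
    """
    payload = json.loads(raw_json)
    objects = []
    for item in payload.get("results", []):
        if item["confidence"] < min_confidence:
            continue  # ignore detections the model is unsure about
        box = item["boundingBox"]
        left = box["left"] * image_width
        top = box["top"] * image_height
        objects.append(DetectedObject(
            tag=item["tagName"],
            confidence=item["confidence"],
            left=left,
            top=top,
            right=left + box["width"] * image_width,
            bottom=top + box["height"] * image_height,
        ))
    return objects
```

The image width and height have to come from somewhere else in the flow; they are passed in explicitly here rather than guessed.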

Form Recognizer in Cognitive Services

Now that we have a model that recognizes and tags objects in our image, we need another process that will OCR the entire image and return a .json file containing the recognized text and its pixel locations. For this, we turn to Azure.

- Create a new resource group in Azure.

- In the resource group, create a new resource using the Form Recognizer service.

- Once the service has been created, navigate to it and copy the endpoint URL.

- You will also need one of the two keys listed on the resource's Keys and Endpoint page.

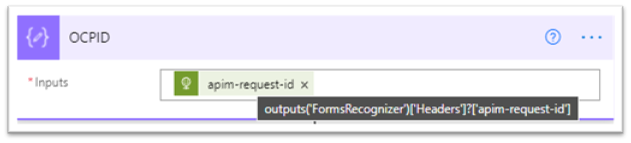

Now that things are configured in Azure, we can refocus on Power Automate. Using the Form Recognizer service in Azure is a two-step process:

- Send the image to the Form Recognizer endpoint created above. The service begins processing the image and returns a RequestID.

- Using this RequestID, make a second call to the endpoint to retrieve the results once the service has had a chance to process the image.

The result of this processing is a .json file that we will use for further processing.

Add a new HTTP activity to Power Automate and configure it as follows:

- Ocp-Apim-Subscription-Key must be in the header. Its value is one of the keys from the Form Recognizer service configured above.

Store the RequestID that is returned from this call in a Data Operation.
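As a rough sketch of what this first HTTP activity sends, the Python below builds the request without sending it. It assumes the Form Recognizer v2.1 Layout API (`/formrecognizer/v2.1/layout/analyze`); the article does not say which model or API version it calls. In that API version the service answers with `202 Accepted` and an `Operation-Location` response header whose last path segment is the ID used by the follow-up call, which appears to correspond to the RequestID stored here. The second helper extracts that ID.

```python
from urllib.parse import urlsplit
from urllib.request import Request


def build_analyze_request(endpoint: str, key: str, image_bytes: bytes,
                          content_type: str = "image/png") -> Request:
    """Build (but do not send) the call that submits an image for analysis.

    ASSUMPTION: the v2.1 Layout API path; the original flow may differ.
    """
    url = endpoint.rstrip("/") + "/formrecognizer/v2.1/layout/analyze"
    return Request(
        url,
        data=image_bytes,
        headers={
            "Ocp-Apim-Subscription-Key": key,  # one of the resource's keys
            "Content-Type": content_type,
        },
        method="POST",
    )


def result_id_from_operation_location(operation_location: str) -> str:
    """Return the ID at the end of the Operation-Location header value."""
    path = urlsplit(operation_location).path  # drops any ?api-version=... query
    return path.rstrip("/").rsplit("/", 1)[-1]
```

Sending the request (for example with `urllib.request.urlopen`) is left out so the sketch stays side-effect free; in the flow, the HTTP activity does that part.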

I added a 10-second delay to give the Form Recognizer service time to process the image before retrieving the results.
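A fixed delay works when the analysis is quick, but a larger image may still be in progress when the second call is made. A minimal alternative, assuming the v2.1 status values (`notStarted`, `running`, `succeeded`, `failed`), is to retry the results call until it reports completion. `get_result` is a hypothetical callable standing in for the second HTTP activity, and `sleep` is injectable so the loop can be tested without actually waiting.

```python
import time
from typing import Callable


def wait_for_result(get_result: Callable[[], dict],
                    delay_seconds: float = 10.0,
                    max_attempts: int = 6,
                    sleep: Callable[[float], None] = time.sleep) -> dict:
    """Poll the results call until the analysis succeeds, fails, or times out.

    `get_result` is hypothetical: it should perform the GET on the results
    URL and return the parsed JSON body.
    """
    for attempt in range(max_attempts):
        body = get_result()
        status = body.get("status")
        if status == "succeeded":
            return body
        if status == "failed":
            raise RuntimeError("Form Recognizer reported a failed analysis")
        if attempt < max_attempts - 1:
            sleep(delay_seconds)  # still notStarted/running: wait, then retry
    raise TimeoutError(f"no result after {max_attempts} attempts")
```

In Power Automate, the same idea could be expressed with a Do until loop that wraps the results call and a Delay.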

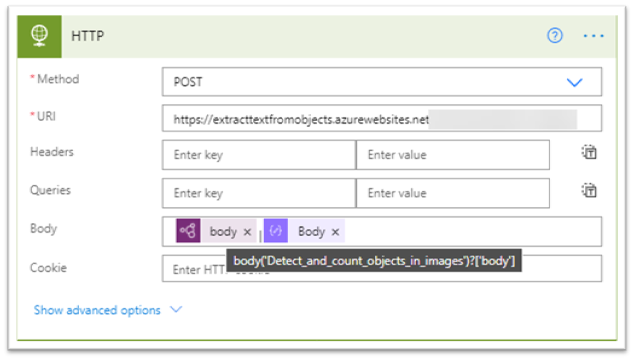

Configure another HTTP activity to get the results:

- Ocp-Apim-Subscription-Key must be in the header. Its value is one of the keys from the Form Recognizer service configured above.

- Outputs – the RequestID stored from the initial Form Recognizer call must be included in the URL to retrieve the results.
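Assuming the v2.1 Layout response shape, the recognized text sits under `analyzeResult.readResults[].lines[]`, and each line's `boundingBox` is eight numbers giving the x,y coordinates of its four corners (in pixels for images). The sketch below flattens that into a list of text lines and collapses each quadrilateral to an axis-aligned rectangle with min/max, a simplification that works best for horizontal text and is an assumption rather than the article's documented approach.

```python
import json


def ocr_lines(raw_json: str) -> list[dict]:
    """Flatten an (assumed v2.1 Layout) result into text lines with pixel boxes."""
    result = json.loads(raw_json)["analyzeResult"]
    lines = []
    for page in result.get("readResults", []):
        for line in page.get("lines", []):
            box = line["boundingBox"]  # [x1, y1, x2, y2, x3, y3, x4, y4]
            xs = box[0::2]
            ys = box[1::2]
            lines.append({
                "page": page.get("page"),
                "text": line["text"],
                # smallest rectangle that contains all four corners
                "left": min(xs), "top": min(ys),
                "right": max(xs), "bottom": max(ys),
            })
    return lines
```

For PDF input, the same API reports coordinates in inches rather than pixels, so the page's `unit` would need to be checked before comparing boxes.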

Putting it all together

Now that we have the results from AI Builder, including the pixel coordinates of the objects we want to target, we can take the pixel coordinates from the Form Recognizer service and check whether they intersect with a tagged object. If they do, we have a match and can extract the text from that object. If no text intersects the object, we know the object contains no text.
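The intersection check itself is simple geometry on axis-aligned rectangles. The sketch below computes the overlap area and, as a stricter variant of the plain intersection described above, only accepts text whose box lies mostly inside the object (`min_fraction`). The optional `padding` enlarges the object box to tolerate the loosely drawn tags mentioned in the Tagging section. Both parameters are illustrative assumptions; a plain any-overlap test corresponds to `intersection_area(...) > 0`.

```python
from typing import NamedTuple


class Rect(NamedTuple):
    left: float
    top: float
    right: float
    bottom: float


def intersection_area(a: Rect, b: Rect) -> float:
    """Area shared by two axis-aligned rectangles (0 if they do not overlap)."""
    width = min(a.right, b.right) - max(a.left, b.left)
    height = min(a.bottom, b.bottom) - max(a.top, b.top)
    return max(width, 0.0) * max(height, 0.0)


def text_in_object(text_box: Rect, object_box: Rect,
                   padding: float = 0.0, min_fraction: float = 0.5) -> bool:
    """True if at least `min_fraction` of the text box lies inside the object.

    `padding` grows the object box on every side before comparing.
    """
    padded = Rect(object_box.left - padding, object_box.top - padding,
                  object_box.right + padding, object_box.bottom + padding)
    text_area = (text_box.right - text_box.left) * (text_box.bottom - text_box.top)
    if text_area <= 0:
        return False  # degenerate box: nothing to compare
    return intersection_area(text_box, padded) / text_area >= min_fraction
```

Requiring most of the text to be inside the object helps avoid attaching a neighbouring label that only grazes the object's edge.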

To analyze the two .json files, the flow calls an Azure Function and passes in both files. The Azure Function determines whether the pixel coordinates overlap and returns .json results containing the text it found.
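The Azure Function's code is not shown in the article. A minimal sketch of its core logic, assuming both inputs have already been reduced to pixel rectangles and bundled into one hypothetical JSON body with `objects` and `lines` arrays, could look like this. It uses a plain any-overlap test and returns one entry per tagged object, with an empty list when no text falls inside it.

```python
import json


def match_text(request_body: str) -> str:
    """Hypothetical core of the Azure Function: pair OCR lines with tagged objects.

    ASSUMED input shape (coordinates already in pixels):
      {"objects": [{"tag", "left", "top", "right", "bottom"}, ...],
       "lines":   [{"text", "left", "top", "right", "bottom"}, ...]}
    """
    data = json.loads(request_body)
    matches = []
    for obj in data["objects"]:
        texts = [
            line["text"]
            for line in data["lines"]
            # the boxes intersect when they overlap on both the x and y axes
            if line["left"] < obj["right"] and obj["left"] < line["right"]
            and line["top"] < obj["bottom"] and obj["top"] < line["bottom"]
        ]
        # an empty list means the object contains no recognized text
        matches.append({"tag": obj["tag"], "text": texts})
    return json.dumps({"matches": matches})
```

Inside an HTTP-triggered Azure Function, the request body would be passed to `match_text` and the returned string sent back as the response.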

The result of the Azure Function is a JSON response that can be used to update the SharePoint document library.

Store the string output in a Data Operation.

Update the SharePoint document library with the text from the JSON response.
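How the JSON is mapped to library columns is not shown. One possible approach, assuming a hypothetical `{"matches": [{"tag": ..., "text": [...]}]}` response shape, is to flatten the matches into a single string before updating the file's properties. A SharePoint "Single line of text" column holds at most 255 characters, so the sketch truncates to that limit; a "Multiple lines of text" column would avoid the truncation.

```python
import json

SHAREPOINT_TEXT_LIMIT = 255  # maximum length of a "Single line of text" column


def metadata_value(function_response: str, limit: int = SHAREPOINT_TEXT_LIMIT) -> str:
    """Flatten the (assumed) matches response into one searchable column value."""
    matches = json.loads(function_response)["matches"]
    # keep only objects that actually contained text, e.g. "valve: PV-101, 2 in"
    parts = [f"{m['tag']}: {', '.join(m['text'])}" for m in matches if m["text"]]
    value = "; ".join(parts)
    return value[:limit]  # truncate so the update does not exceed the column size
```

Keeping the tag next to its text lets a search for either the component type or its label find the image.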

Search Experience

Once the text extracted from the image has been written to the document's metadata in SharePoint, users can search for that text and view the image in the search results.

Resources

Download the solution artifacts from GitHub.
