- Microsoft Power Automate Community

- Welcome to the Community!

- News & Announcements

- Get Help with Power Automate

- General Power Automate Discussion

- Using Connectors

- Building Flows

- Using Flows

- Power Automate Desktop

- Process Mining

- AI Builder

- Power Automate Mobile App

- Translation Quality Feedback

- Connector Development

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read Only)

- Power Platform and Dynamics 365 Integrations (Read Only)

- Galleries

- Community Connections & How-To Videos

- Webinars and Video Gallery

- Power Automate Cookbook

- Events

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Community Blog

- Power Automate Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

- Microsoft Power Automate Community

- Galleries

- Power Automate Cookbook

- CSV to Dataset

CSV to Dataset

03-18-2022 21:41 PM - last edited 01-31-2024 09:37 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

CSV to Dataset

Template for converting large CSV files to JSON, then sending the data to a table or list.

This get data for CSV works even if there are commas in the CSV data. The only requirement is the the CSV file must follow the standard of placing double quotes “ , “ around any item eith in-data commas.

(If you can export as a semicolon, tab, or other delimited file, then you can probably just use a simpler method like Paul’s here: https://www.tachytelic.net/2021/02/power-automate-parse-csv/?amp)

For more information on the delimiter change piece, visit this previous post:

To make a flow to send any CSV data to a new Excel table with given CSV header names without any column mappings, check this template:

https://powerusers.microsoft.com/t5/Power-Automate-Cookbook/CSV-To-New-Excel-Table/m-p/1826096#M964

*Copying the template into an existing flow may create issues with expressions. You may need to copy the rest of your existing flow into the CSV template flow, then move the template scope where you need it.

Version 3 Uploaded 04/09/2022

(More minor fixes & additions.

I adjusted several expressions so it can now handle a few more scenarios with arrays in the CSV data. It should handle any array that doesn't include double quotes and any array that is all strings with double quotes, so ["String1", "String2", "String3"], but it will have issues if it is a mixed array with some double-quoted strings and some other values, for example ["String", 4, 03/05/2022, "String2"] won't work.

I also adjusted how the LineBreak setting is set-up so it now uses the /r/n for the LineBreak. I also provided this link in the flow so anyone can look up the right string for the decodeUriComponent expression(s) if they happen to have different LineBreak characters. This change also made it possible to differentiate between in-data line-breaks and CSV row line-breaks on the files I tested, so it should now replace the in-data line-breaks, like the multiple-choice fields some sites use, with semi-colons. That should make those records much easier to deal with & parse in later actions.

I also looked over a problem with in-data trailing commas. I added a line in the settings where anyone can toggle whether they want it to adjust for trailing OR leading commas in the data, it just can't handle both in one dataset. So if one column in one row has ",String1 String2" and another column in another row has "String 3 String4," then it will have errors.)

Google Drive Link To Download: https://drive.google.com/file/d/1X6BGhXWSnZtFgUK0v-5bHo7RuKSNjNtV/view?usp=sharing

(Older) Version 2 Uploaded 03/26/2022

(Adjusted the 1st Select input so it can now also deal with in-data commas in the 1st column. Added more lines to the 1st Select so it can now handle up to 50 columns with commas in them.)

Google Drive Link To Download: https://drive.google.com/file/d/1UVEewW9J1m9wvSppgFrf7rs3H3txSPUN/view?usp=sharing

Update 06/01/2022

Microsoft Power Platform & Paul Murana recently did a video demonstration of how to handle CSV & other files in dataflows: https://youtu.be/8IvHxRnwJ7Q

But it will only output to a Dataverse or Dataverse for teams table.

If you have any trouble with the standard legacy flow import method, you can also try an alternate Power Apps Solution import method here: Re: CSV to Dataset - Page 8 - Power Platform Community (microsoft.com)

Thanks for any feedback,

Please subscribe to my YouTube channel (https://youtube.com/@tylerkolota?si=uEGKko1U8D29CJ86).

And reach out on LinkedIn (https://www.linkedin.com/in/kolota/) if you want to hire me to consult or build more custom Microsoft solutions for you.

watch?v=-P-RDQvNd4A

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

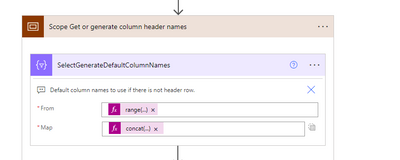

Where the OneDrive “Body” is.

You should use the same dynamic content wherever I put “InputData” and numbers for the other pieces.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

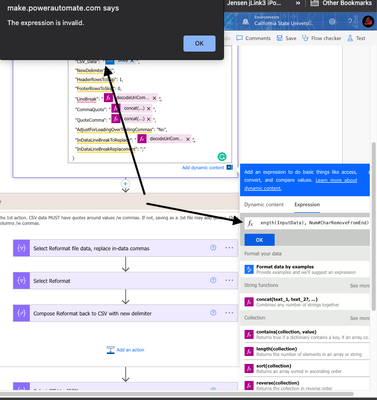

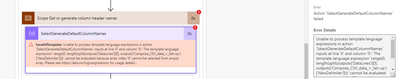

I get an error The expression is invalid.

This is where I'm entering the take() argument as a function, just before the OneDrive Body. And I was going to add the skip() function after the OneDrive Body, but that too gave me the same error.

If you get a chance, would it be possible for a screenshot with an example of your suggestion?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Those are placeholder names that you need to replace with your dynamic content & with the number of characters to take off the front or back.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I am in a scenario that the CSV being delivered to me is using TAB as the delimiter.

Can I adjust your version 3.2 flow to accommodate a TAB delimiter? Any suggestions would be much appreciated.

Also if I am using a tab delimiter, could the text in one of my columns contain commas. or should I set the export to pull in that field with quotes.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@mcnemare1

You may be more fortunate than many others who find this template seeking to avoid in-data commas, as TAB keys are usually much less likely to show up in the actual CSV data. So assuming you don't need to avoid in-data TABs, you should be able to use a simpler method of just splitting on TAB like what @Paulie78 shows in his CSV parsing template: https://www.tachytelic.net/2021/02/power-automate-parse-csv/

Just use a TAB key or a decodeuricomponent('%09') in the places where Paulie uses commas.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

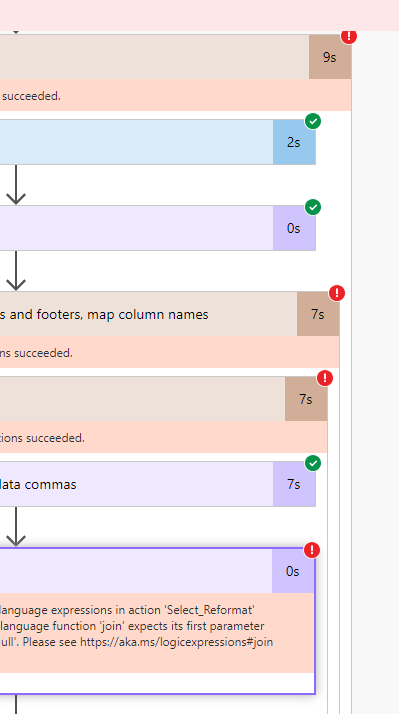

Hi, I think I'm having this error at Select Reformat step, I tried to copy the expression..

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

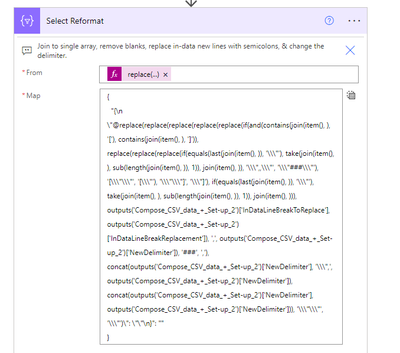

@biboy852

When you go to input a formula, make sure to click in the input area, get the pop-up, then select the Expressions tab in the pop-up and post the formula/expressions there.

The from field should be...

body('Select_Reformat_file_data,_replace_in-data_commas')

And the Map input should be...

replace(

replace(

replace(

replace(

replace(

if(and(contains(join(item(), ''), '['), contains(join(item(), ''), ']')),

replace(replace(replace(

if(equals(last(join(item(), '')), '"'), take(join(item(), ''), sub(length(join(item(), '')), 1)), join(item(), '')), '",,"', '"###"'), '[""', '["'), '""]', '"]'),

if(equals(last(join(item(), '')), '"'), take(join(item(), ''), sub(length(join(item(), '')), 1)), join(item(), ''))),

outputs('Compose_CSV_data_+_Set-up')['InDataLineBreakToReplace'], outputs('Compose_CSV_data_+_Set-up')['InDataLineBreakReplacement']),

',', outputs('Compose_CSV_data_+_Set-up')['NewDelimiter']),

'###', ','),

concat(outputs('Compose_CSV_data_+_Set-up')['NewDelimiter'], '",', outputs('Compose_CSV_data_+_Set-up')['NewDelimiter']), concat(outputs('Compose_CSV_data_+_Set-up')['NewDelimiter'], outputs('Compose_CSV_data_+_Set-up')['NewDelimiter'])),

'""', '"')- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

ok thank you 😀

I'm getting errors for this step, do I need to change anything here beside redoing the map? Thank you!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

That should just have the expression there & nothing else. Remove the brackets, colon, quotes, comma,etc.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I changed it to normal