Re: Excel Batch Create, Update, and Upsert

01-13-2023 14:53 PM - last edited 01-13-2023 14:55 PM

Excel Batch Create, Update, and Upsert

Update & Create Excel Records 50-100x Faster

I was able to develop an Office Script to update rows and an Office Script to create rows from Power Automate array data. Instead of the flow making a separate action API call for each individual row update or creation, it can send a single array of new data, and the Office Scripts will match up primary key values, update each row they find, then create the rows they don't find.

And these Scripts do not require manually entering or changing any column names in the Script code.
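The matching step described above can be sketched in plain TypeScript. This is only an illustration of the upsert idea, not the template's actual script code: the `Row` type, the `planUpsert` name, and the sample column names are assumptions made for the example, and no Excel API is called.

```typescript
// Hypothetical row shape for illustration: column name -> cell value.
type Row = { [column: string]: string | number | boolean | null };

interface UpsertPlan {
  updates: Row[]; // incoming rows whose key already exists in the table
  creates: Row[]; // incoming rows whose key was not found in the table
}

// Split incoming rows into updates and creates by comparing primary key values.
function planUpsert(existing: Row[], incoming: Row[], keyColumn: string): UpsertPlan {
  // Index the existing keys once so each incoming row needs a single lookup.
  const existingKeys: { [key: string]: boolean } = {};
  for (const row of existing) {
    existingKeys[String(row[keyColumn])] = true;
  }

  const plan: UpsertPlan = { updates: [], creates: [] };
  for (const row of incoming) {
    if (existingKeys[String(row[keyColumn])] === true) {
      plan.updates.push(row);
    } else {
      plan.creates.push(row);
    }
  }
  return plan;
}
```

Keys are compared as strings here, so `1` and `"1"` count as the same key. That is a choice made for this sketch.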

• In testing with batches of 1000 updates or creates, it processes ~2000 row updates or creates per minute, 50x faster than the standard Excel create row or update row actions at the maximum concurrency of 50. It also accomplished all the creates or updates with fewer than 4 actions, or only 0.4% of the standard 1000 action API calls.

• The Run Script code for processing data has 2 modes: the Mode 2 batch method, which saves & updates a new instance of the table before posting batches of table ranges back to Excel, & the Mode 1 row by row update, which calls on the Excel table directly.

The Mode 2 script batch processing method will activate for updates on tables less than 1 million cells. It does encounter more errors with larger tables because it is loading & working with the entire table in memory.

Shoutout to Sudhi Ramamurthy for this great batch processing addition to the template!

Code Write-Up: https://docs.microsoft.com/en-us/office/dev/scripts/resources/samples/write-large-dataset

Video: https://youtu.be/BP9Kp0Ltj7U

The Mode 1 script row by row method will activate for Excel tables with more than 1 million cells. But it is still limited by batch file size so updates on larger tables will need to run with smaller cloud flow batch sizes of less than 1000 in a Do until loop.
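As a rough sketch of what running with smaller batch sizes means, the helper below splits an array of rows into batches of a chosen size. In the flow, this role is played by the Do until loop; `chunkRows` is a hypothetical name used only for this example.

```typescript
// Split rows into consecutive batches of at most batchSize items each.
function chunkRows<T>(rows: T[], batchSize: number): T[][] {
  if (batchSize < 1 || Math.floor(batchSize) !== batchSize) {
    throw new Error("batchSize must be a positive whole number");
  }
  const batches: T[][] = [];
  for (let start = 0; start < rows.length; start += batchSize) {
    // slice() stops at the end of the array, so the last batch may be smaller.
    batches.push(rows.slice(start, start + batchSize));
  }
  return batches;
}
```

Each batch would then be sent to the Run script action in its own loop iteration.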

The Mode 1 row by row method is also used when the ForceMode1Processing field is set to Yes.
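The mode selection described above can be summarized in a small sketch. The function name, the type names, and the handling of a table with exactly 1 million cells (treated as Mode 1 here) are assumptions. The post only states "less than" and "more than" 1 million cells.

```typescript
type ProcessingMode = "Mode 1 (row by row)" | "Mode 2 (batch)";

// Cell-count threshold taken from the post; the exact boundary handling is assumed.
const CELL_LIMIT = 1000000;

function chooseProcessingMode(
  rowCount: number,
  columnCount: number,
  forceMode1Processing: "Yes" | "No"
): ProcessingMode {
  // ForceMode1Processing = Yes always selects the row by row method.
  if (forceMode1Processing === "Yes") {
    return "Mode 1 (row by row)";
  }
  const cellCount = rowCount * columnCount;
  return cellCount < CELL_LIMIT ? "Mode 2 (batch)" : "Mode 1 (row by row)";
}
```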

Be aware that some characters in column names, like \ / - _ . : ; ( ) & $ may cause errors when processing the data. Also backslashes \ in the data, which are usually used to escape characters in strings, may cause errors when processing the JSON.
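One way to catch these cases before running the flow is a quick pre-check of the column names and data. The sketch below is not part of the template. The character list is copied from the warning above, and the function names are hypothetical.

```typescript
// Characters the post warns about in column names.
const RISKY_CHARACTERS = ["\\", "/", "-", "_", ".", ":", ";", "(", ")", "&", "$"];

// Return the column names that contain at least one of the risky characters.
function findRiskyColumnNames(columnNames: string[]): string[] {
  return columnNames.filter(function (name) {
    return RISKY_CHARACTERS.some(function (ch) {
      return name.indexOf(ch) !== -1;
    });
  });
}

// Report whether a data value contains a backslash.
function hasBackslash(value: string): boolean {
  return value.indexOf("\\") !== -1;
}
```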

Version 7 Note

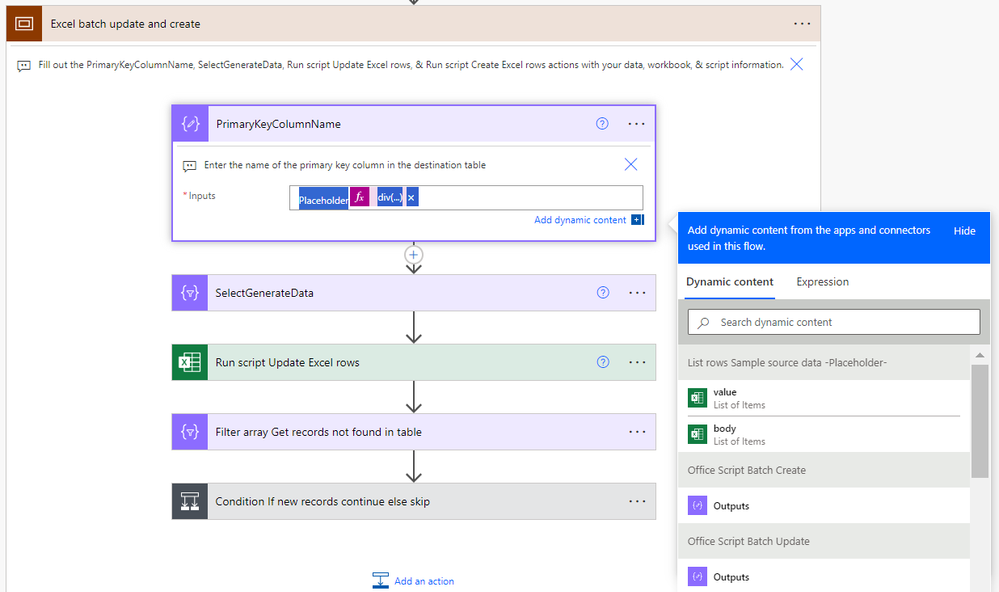

Diverging from what is shown in the video, I was able to remove almost all the flow actions in the "Match new and existing data key values then batch update" scope after replicating their functions in the scripts themselves. The flow now goes directly from the "SelectGenerateData" action to the "Run script Update Excel rows" action and the script handles matching up the UpdatedData JSON keys/field names to the destination table headers.

Also version 7 changes the primary key set up in the SelectGenerateData and changes the logic for skipping cell value updates & blanking out cell values.

Now the primary key column name from the destination table must be present in the SelectGenerateData action, with the dynamic content for the values you want to match up to update. There is no more 'PrimaryKey' line. The update script automatically references the primary key column in the SelectGenerateData data based on the PrimaryKeyColumnName input on the update script action in the flow.

Now leaving a blank "" in the SelectGenerateData action will make that cell value in the Excel table empty, while leaving a null in the SelectGenerateData action will skip the update and leave the cell value that currently exists in the Excel table unchanged.
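The blank versus null rule can be illustrated with a small sketch. This is a simplified model of the behavior described above, not the BatchUpdateV7 script itself. The type and function names are hypothetical, and ignoring keys that are not existing columns is an assumption of the example.

```typescript
type CellValue = string | number | boolean;
type UpdateValue = CellValue | null;

// Apply one update record to one existing row, following the V7 rule:
// null leaves the cell alone, "" empties it, anything else overwrites it.
function applyUpdate(
  existing: { [column: string]: CellValue },
  update: { [column: string]: UpdateValue }
): { [column: string]: CellValue } {
  const result: { [column: string]: CellValue } = {};
  for (const column of Object.keys(existing)) {
    const current = existing[column];
    const value = update[column];
    if (value === null || value === undefined) {
      result[column] = current; // skipped: keep the existing cell value
    } else {
      result[column] = value; // "" blanks the cell, other values replace it
    }
  }
  return result;
}
```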

Version 6 which looks closer to the version shown in the video can still be accessed here: https://powerusers.microsoft.com/t5/Power-Automate-Cookbook/Excel-Batch-Create-Update-and-Upsert/m-p...

Version 7 Set-Up Instructions

Go to the bottom of this post & download the BatchExcel_1_0_0_xx.zip file. Go to the Power Apps home page (https://make.powerapps.com/). Select Solutions on the left-side menu, select Import solution, Browse your files & select the BatchExcel_1_0_0_xx.zip file you just downloaded. Then select Next & follow the menu prompts to apply or create the required connections for the solution flows.

Once imported, find the Batch Excel solution in the list of solutions & click it to open the solution. Then click on the Excel Batch Upserts V7 item to open the flow. Once inside the flow, delete the PlaceholderValue Delete after import action.

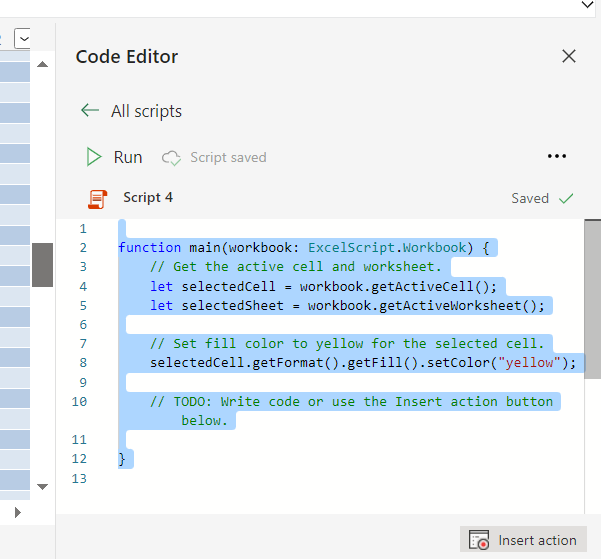

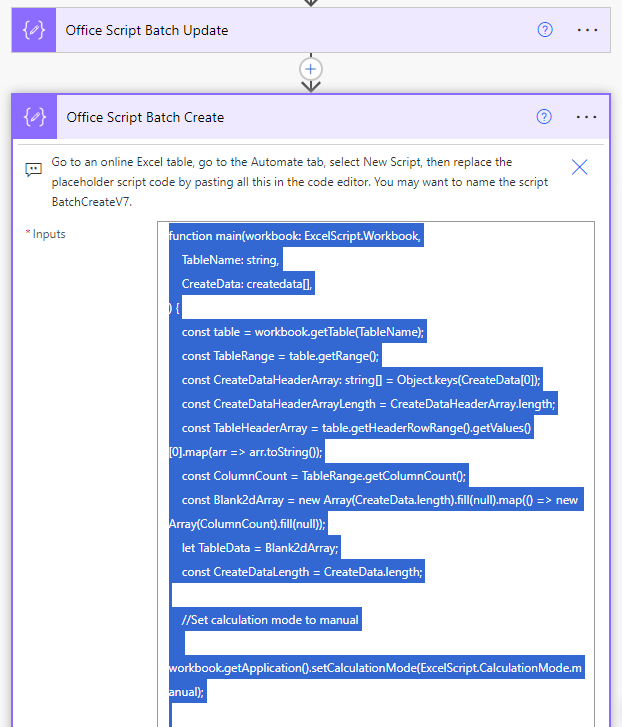

Open the Office Script Batch Update compose action to find the BatchUpdateV7 script code. Select everything inside the compose input & press Control + C to copy it to the clipboard.

Then find & open an Excel file in Excel Online. Go to the Automate tab, click on All Scripts & then click on New Script.

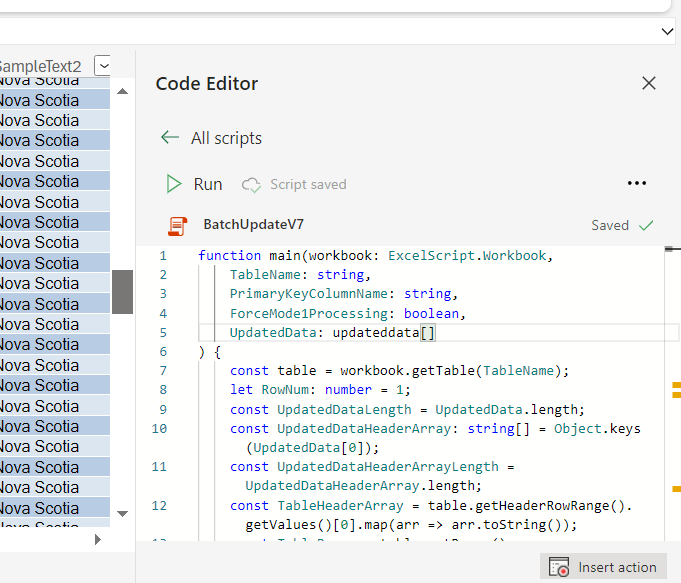

When the new script opens, select everything in the script & press Control + V to paste the BatchUpdateV7 script from the clipboard into the script editor. Then rename the script BatchUpdateV7 & save it. That should make the BatchUpdateV7 script selectable in the later Run script flow action.

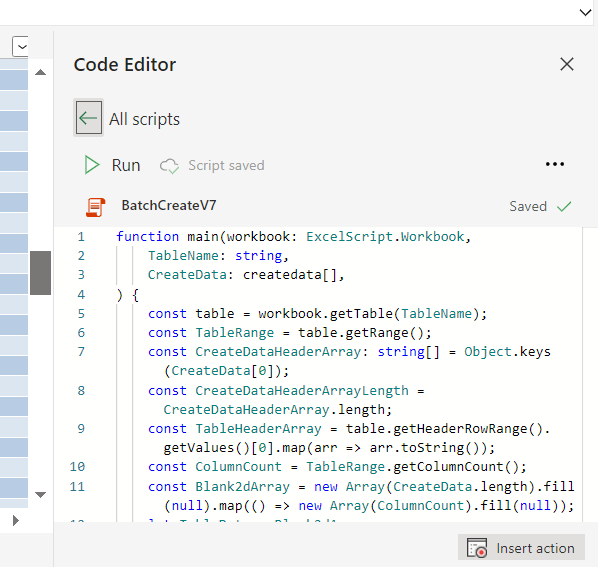

Do the same process to import the BatchCreateV7 script.

Then go to the List rows Sample source data action. If you are going to use an Excel table as the source of updated data, then you can fill in the Location, Document Library, File, & Table information on this action. If you are going to use a different source of updated data like SharePoint, SQL, Dataverse, an API call, etc, then delete the List rows Sample source data placeholder action & insert your new get data action for your other source of updated data.

Following that, go to the Excel batch update & create scope. Open the PrimaryKeyColumnName action, remove the placeholder values in the action input & input the column name of the unique primary key for your destination Excel table. For example, I use ID for the sample data.

Then go to the SelectGenerateData action.

If you replaced the List rows Sample source data action with a new get data action, then you will need to replace the values dynamic content from that sample action with the new values dynamic content of your new get data action (the dynamic content that outputs a JSON array of the updated data).

In either case, you will need to input the table header names from the destination Excel table on the left & the dynamic content for the updated values from the updated source action on the right. You MUST include the column header for the destination primary key column & the primary key values from the updated source data here, so the Update script can match up which rows in the destination table need which updates from the source data. All the other columns are optional. You only need to include them if you want to update their values.
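Conceptually, the SelectGenerateData mapping turns each source record into an object keyed by destination header names. The sketch below models that in TypeScript under stated assumptions: `generateData`, `HeaderMap`, and the sample field names are hypothetical, and a missing source field is mapped to null here.

```typescript
type SourceRecord = { [field: string]: string | number | null };
// Destination table header (left side) -> source field name (right side).
type HeaderMap = { [destinationHeader: string]: string };

function generateData(
  source: SourceRecord[],
  headerMap: HeaderMap,
  primaryKeyColumn: string
): SourceRecord[] {
  // The primary key header must be part of the mapping, as the post requires.
  if (!Object.prototype.hasOwnProperty.call(headerMap, primaryKeyColumn)) {
    throw new Error("Mapping must include the primary key column: " + primaryKeyColumn);
  }
  return source.map(function (record) {
    const row: SourceRecord = {};
    for (const header of Object.keys(headerMap)) {
      const value = record[headerMap[header]];
      row[header] = value === undefined ? null : value;
    }
    return row;
  });
}
```

For example, a mapping of `{ ID: "src_id", Total: "src_total" }` would produce rows with only the ID and Total headers, leaving every other destination column out of the update.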

After you have added all the columns & updated data you want to the SelectGenerateData action, then you can move to the Run script Update Excel rows action. Here add the Location, Document Library, File Name, Script, Table Name, Primary Key Column Name, ForceMode1Processing, & UpdatedData. You will likely need to select the right-side button to switch the UpdatedData input to a single array input before inserting the dynamic content for the SelectGenerateData action.

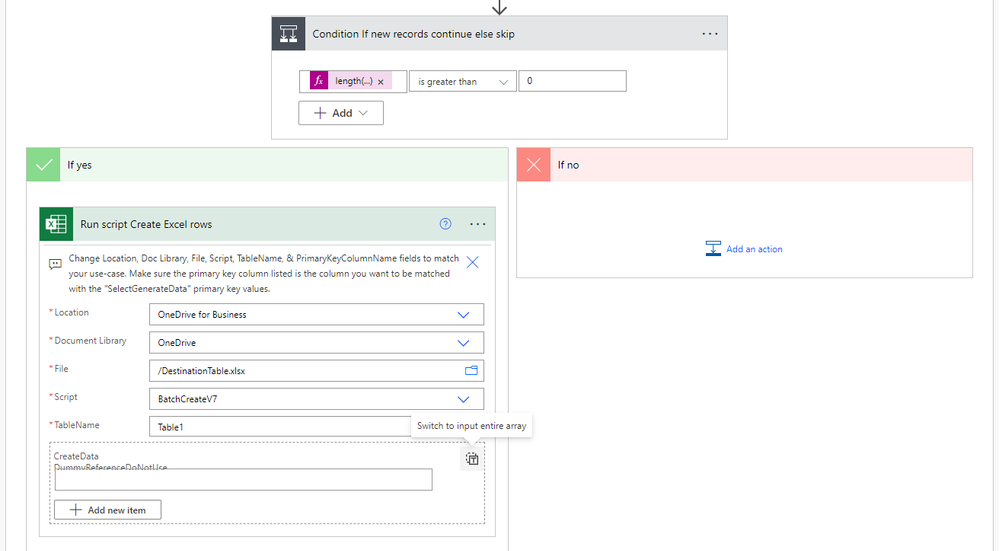

Then open the Condition If new records continue else skip action & open the Run script Create Excel rows action. In this run script action input the Location, Document Library, Script, Table Name, & CreateData. You again will likely need to select the right-side button to change the CreateData input to a single array input before inserting the dynamic content for the Filter array Get records not found in table output.

If you need just a batch update, then you can remove the Filter array Get records not found in table & Run script Create Excel rows actions.

If you need just a batch create, then you can replace the Run script Batch update rows action with the Run script Batch create rows action, delete the update script action, and remove the remaining Filter array Get records not found in table action. Then any updated source data sent to the SelectGenerateData action will just be created, it won't check for rows to update.

Thanks for any feedback,

Please subscribe to my YouTube channel (https://youtube.com/@tylerkolota?si=uEGKko1U8D29CJ86).

And reach out on LinkedIn (https://www.linkedin.com/in/kolota/) if you want to hire me to consult or build more custom Microsoft solutions for you.

Office Script Code

(Also included in a Compose action at the top of the template flow)

Batch Update Script Code: https://drive.google.com/file/d/1kfzd2NX9nr9K8hBcxy60ipryAN4koStw/view?usp=sharing

Batch Create Script Code: https://drive.google.com/file/d/13OeFdl7em8IkXsti45ZK9hqDGE420wE9/view?usp=sharing

(ExcelBatchUpsertV7 is the core piece, ExcelBatchUpsertV7b includes a Do until loop set-up if you plan on updating and/or creating more than 1000 rows on large tables.)

watch?v=HiEU34Ix5gA

Here you go with another example that leverages the same approach:

And I can confirm that output from the Select action is a 1-dim array (I am already using this trick in other flows):

Aside from nesting this comparison into an Apply to Each loop (loop thru records from array 1 and compare each occurrence with each occurrence from array 2, or loop thru records from array 1 and use a "contains" filter to check presence of records from array 2), I don't see other options. This "apply to each" would be IMHO less efficient than one single Filter action with "contains" comparison criteria.

But I'm curious to hear your thoughts 🙂

Thanks.

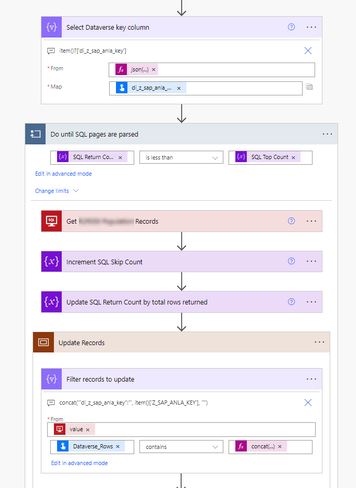

If z zap ania corresponds to a key field in your SQL table, then you should be able to do a Filter array where

Select Dataverse key column body contains item()['InsertCorrespondingColumnHere']

No concatenate

Unless I’m misunderstanding what you are asking.

That's exactly what I tried.

But what you suggested earlier is:

"If you can get the body items into an array, then you can set it to something like

CreatedArrayContent equals item()

And that will be much more efficient because it is comparing against a few items of an array, rather than searching an entire string output of all the columns / data."

My question was about how I could use "equals" and not "contains" in my comparison.

But nevermind, I have something working with "contains" that's taking a few minutes for 100K+ records, so all good.

Thanks for your help!

Yes, sorry I was switching between too many different tasks & missed some details.

Your scenario can’t use equals. It still needs contains because it will search for the full, exact item within an array of items. That is more efficient than searching an entire string of all the array items each time.

So

Search 3 times to find String 2 in

[String1, String2, String3]

Or less efficiently search like all 24 characters for “String1”

Not sure if that’s the exact computer science of it, but it’s what I’ve guessed / intuited is happening after reading related things & working with these tools for a while.
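To make the difference concrete, here is a small TypeScript sketch of the two kinds of "contains" check. It does not measure efficiency, and the function names and sample keys are made up for the example. It only shows that checking membership in an array matches whole items, while searching a string of all the items can also match a partial item.

```typescript
// Membership test: true only when an array element equals the target exactly.
function arrayContains(items: string[], target: string): boolean {
  return items.indexOf(target) !== -1;
}

// Substring test: true when the target appears anywhere in the text.
function stringContains(text: string, target: string): boolean {
  return text.indexOf(target) !== -1;
}

const sampleKeys = ["String10", "String2", "String3"];

// "String1" is not an item of the array...
const foundInArray = arrayContains(sampleKeys, "String1"); // false

// ...but it is a substring of "String10" in the serialized text.
const foundInString = stringContains(JSON.stringify(sampleKeys), "String1"); // true
```

So besides any speed difference, comparing against an array of key values avoids false matches on partial keys.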

Hi Tyler,

I am now playing with your (brilliant) Excel Batch solution, and found a couple of bugs that I wanted to share with you:

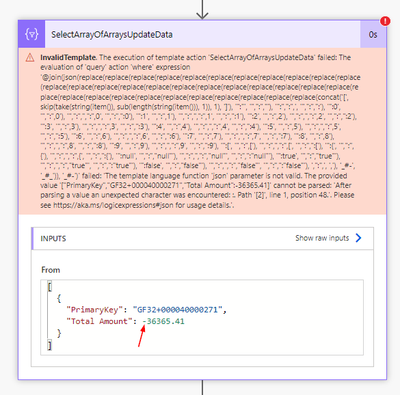

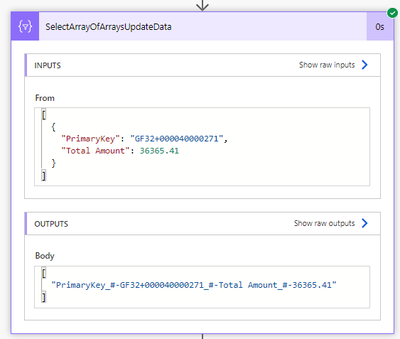

- The json() expression in SelectArrayOfArraysUpdateData action does not support negative numbers:

- I also noticed that your flow requires the primary key column to be named PrimaryKey, otherwise the following action fails:

Thanks for your help!

Fred

Thanks for reviewing the template Fred.

I’ll have to take a look & see if there is a way to also handle the negative numbers.

As for the primary key expression, the PrimaryKey label in the ‘SelectGenerateUpdateData’ action is not supposed to change. Users should input whatever value or combination of values that make up the primary key in there.

@Fred_S

I'm still able to create & update with negative numbers on the newer V5 template...

I think I can understand what happened here. My earlier flow, script, & video likely showed using integers or the int( ) expression to send numbers to the worksheet. While making the newer version, I found I didn't need to convert to any datatypes beyond strings, and it would still output to the worksheet as the intended data-type as long as the receiving columns are already pre-set for that data type.

So you should be able to set that column as a Number column on your destination Excel table, then use the plain dynamic content values in the GenerateUpdateData mapping.

Was that the issue?

Please share if you get it working on your side, thanks!

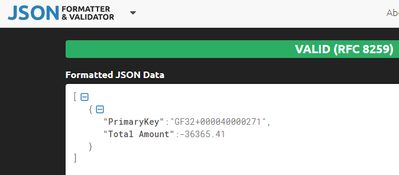

Your first screenshot gave me some hint: all your numeric values are quoted, whereas in my case they are not.

While the following is still considered as a valid JSON payload:

it seems that json() function cannot parse it correctly.

My source is a Dataverse table, and the source field holding total amount is "di_z_total".

item()?['di_z_total'] would give me 123, not "123". Or -123.45, and not "-123.45".

However, after reviewing the Dataverse payload, I found this:

"di_z_total@OData.Community.Display.V1.FormattedValue":"583,182.50","di_z_total@odata.type":"#Decimal","di_z_total":583182.5

and using the FormattedValue field name was the trick:

item()?['di_z_total@OData.Community.Display.V1.FormattedValue']

Now all my source values are quoted and Excel would interpret them correctly based on the preset datatype for each column.
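The same idea can be sketched in TypeScript: prefer the formatted (already quoted) value when the payload carries one, otherwise fall back to converting the raw value to a string. The annotation suffix is taken from the payload quoted above. The `quotedValue` name, the fallback behavior, and the null handling are assumptions of this example.

```typescript
type DataverseRecord = { [field: string]: string | number | boolean | null };

// Annotation suffix as it appears in the Dataverse payload quoted in this post.
const FORMATTED_SUFFIX = "@OData.Community.Display.V1.FormattedValue";

// Return a string for the field: the formatted annotation if present,
// otherwise the raw value converted to a string (null stays null).
function quotedValue(record: DataverseRecord, field: string): string | null {
  const formatted = record[field + FORMATTED_SUFFIX];
  if (typeof formatted === "string") {
    return formatted;
  }
  const raw = record[field];
  return raw === null || raw === undefined ? null : String(raw);
}
```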

This one really took me hours to figure out, but I am glad to have finally solved it 🙂

Now I can see that in my target Excel table, it creates 2 empty rows:

This is my template:

And this is the outcome:

Any clue why I have those 2 extra blank rows?

Thanks!

@Fred_S

Thanks for the Dataverse work-around. And if anyone is facing a similar issue with any source, they can also use the string( ) expression for the item piece on the GenerateUpdateData mapping, for example...

string(item()?['InputFieldNameHere'])
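For readers who think in code, the effect of wrapping every mapped value in string( ) is roughly the following. This is a TypeScript analogy rather than a Power Automate expression. `stringifyValues` is a hypothetical name, and keeping null as null is a choice made for this sketch.

```typescript
type RawRecord = { [field: string]: string | number | boolean | null };
type QuotedRecord = { [field: string]: string | null };

// Convert every non-null value to a string so numbers are sent quoted,
// for example -123.45 becomes "-123.45".
function stringifyValues(record: RawRecord): QuotedRecord {
  const result: QuotedRecord = {};
  for (const field of Object.keys(record)) {
    const value = record[field];
    result[field] = value === null ? null : String(value);
  }
  return result;
}
```

With the destination column pre-set to a Number type, the quoted value should still land in Excel as a number, as described earlier in the thread.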

And the blank rows are the best option I could think of with the time available to avoid an error when the table starts completely empty. It isn't an issue if you are updating or creating on a table with data already in it.

If you want to avoid those when filling a new template Excel table, you can make your template Excel table start with a set row with a set primary key value that you can then remove after the data is added. So for example you could set the initial row with a number id primary key field set to 0, then after the batch actions add a delete row action to delete the row where id = 0.

Hi Tyler,

I wanted to provide some feedback after my experiments:

- First of all, your flow works very well, thank you for putting it together for us!

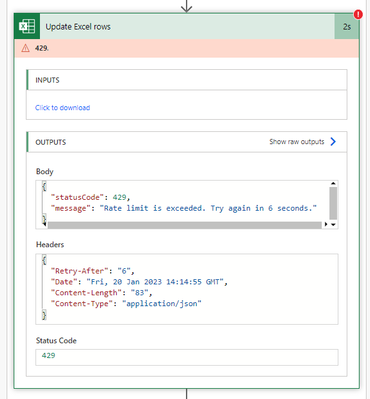

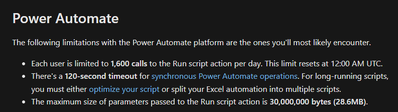

- Excel Online action can throttle: I tried to update 3 different files at a time by pulling data from Dataverse, and it turns out the Run Script action throttles pretty quickly. I saw that you covered this in the past. I can confirm though this is really a limitation of the connector, and not a timeout issue. The way I resolved it was simply by serializing my upserts. It takes longer, but it works 🙂

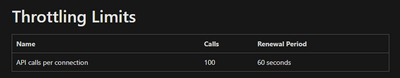

- Not only does this action have throttling limits, it also has a daily limit:

The full error says:

You've reached your daily limit of calls to the 'Run script' action. Your quota will be reset at 1/21/2023 00:00 UTC.

Those limits are documented here: Platform limits and requirements with Office Scripts - Office Scripts | Microsoft Learn.

Screenshot of this page below:

And this page provides details about throttling limits: Excel Online (Business) - Connectors | Microsoft Learn

I hope that will help others.

Thanks again for sharing your work with us!

Fred