Excel Batch Create, Update, and Upsert

06-13-2022 09:31 AM - last edited 03-29-2024 15:51 PM

Update & Create Excel Records 50-100x Faster

I developed one Office Script to update rows and another Office Script to create rows from Power Automate array data. Instead of the flow making a separate action API call for each individual row update or creation, it sends an array of new data, and the Office Scripts match up primary key values, update each row they find, then create the rows they don't find.

And these Scripts do not require manually entering or changing any column names in the Script code.
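To make the upsert idea concrete, here is a minimal in-memory sketch of it. This is not the BatchUpdateV7 or BatchCreateV7 code; `upsertRows`, `TableCell`, and the sample column names are hypothetical, and the table is assumed to be a header array plus a 2D array of row values.

```typescript
// Assumed cell and record shapes for this sketch only.
type TableCell = string | number | boolean;
type TableRow = TableCell[];
type IncomingRecord = { [column: string]: TableCell };

interface UpsertResult {
  rows: TableRow[]; // table body after the upsert
  updated: number;  // records whose primary key matched an existing row
  created: number;  // records appended because no key matched
}

// Hypothetical helper: update rows whose primary key matches, append the rest.
function upsertRows(
  headers: string[],
  body: TableRow[],
  records: IncomingRecord[],
  keyColumn: string
): UpsertResult {
  const keyIndex = headers.indexOf(keyColumn);
  if (keyIndex === -1) {
    throw new Error("Primary key column not found: " + keyColumn);
  }

  // Copy the body and index existing rows by key for one-step lookups.
  const rows = body.map((row) => row.slice());
  const rowIndexByKey: { [key: string]: number } = {};
  rows.forEach((row, i) => {
    rowIndexByKey[String(row[keyIndex])] = i;
  });

  let updated = 0;
  let created = 0;
  for (const record of records) {
    if (!Object.prototype.hasOwnProperty.call(record, keyColumn)) {
      throw new Error("Record is missing the primary key column: " + keyColumn);
    }
    const key = String(record[keyColumn]);
    let target: TableRow;
    if (Object.prototype.hasOwnProperty.call(rowIndexByKey, key)) {
      target = rows[rowIndexByKey[key]];
      updated++;
    } else {
      target = headers.map(() => "" as TableCell);
      rowIndexByKey[key] = rows.length;
      rows.push(target);
      created++;
    }
    // Only columns present in the record are written.
    headers.forEach((header, col) => {
      if (Object.prototype.hasOwnProperty.call(record, header)) {
        target[col] = record[header];
      }
    });
  }
  return { rows, updated, created };
}
```

The whole batch is handled in one call, which is the same reason the flow needs only a few actions instead of one action per row.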

• In testing with batches of 1000 updates or creates, it handles ~2000 row updates or creates per minute, 50x faster than the standard Excel create row or update row actions at the maximum concurrency of 50. It also completed all the creates or updates in fewer than 4 actions, or only 0.4% of the standard 1000 action API calls.

• The Run script code for processing data has 2 modes: Mode 2, the batch method, which saves & updates a new instance of the table before posting batches of table ranges back to Excel, & Mode 1, the row-by-row method, which updates each row by calling on the Excel table.

The Mode 2 batch processing method activates for updates on tables with fewer than 1 million cells. It does encounter more errors on larger tables because it loads & works with the entire table in memory.

Shoutout to Sudhi Ramamurthy for this great batch processing addition to the template!

Code Write-Up: https://docs.microsoft.com/en-us/office/dev/scripts/resources/samples/write-large-dataset

Video: https://youtu.be/BP9Kp0Ltj7U

The Mode 1 row-by-row method activates for Excel tables with more than 1 million cells. It is still limited by batch file size, so updates on larger tables need to run with smaller cloud flow batch sizes of less than 1000 in a Do until loop.

The Mode 1 row by row method is also used when the ForceMode1Processing field is set to Yes.
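The mode rules described above can be sketched as a small function. This is only an illustration, not the script's actual code: `chooseProcessingMode` and `MODE_2_CELL_LIMIT` are hypothetical names, the case-insensitive comparison of "Yes" is an assumption, and so is sending a table of exactly 1 million cells to Mode 1 (the post does not say which mode handles that boundary).

```typescript
type ProcessingMode = 1 | 2;

// Threshold taken from the post: Mode 2 is used below 1 million cells.
const MODE_2_CELL_LIMIT = 1000000;

// Hypothetical helper mirroring the described rules.
function chooseProcessingMode(
  rowCount: number,
  columnCount: number,
  forceMode1Processing: string
): ProcessingMode {
  // Assumption: the ForceMode1Processing input is compared case-insensitively.
  if (forceMode1Processing.trim().toLowerCase() === "yes") {
    return 1;
  }
  // Assumption: exactly 1 million cells falls back to the row-by-row mode.
  return rowCount * columnCount < MODE_2_CELL_LIMIT ? 2 : 1;
}
```

For example, a 5,000-row by 100-column table (500,000 cells) would get Mode 2 under these assumptions, while a 25,000-row by 200-column table (5,000,000 cells) would get Mode 1.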

Be aware that some characters in column names, like \ / - _ . : ; ( ) & $ may cause errors when processing the data. Also backslashes \ in the data, which are usually used to escape characters in strings, may cause errors when processing the JSON.
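If you want to check your headers and data before running the flow, something like the sketch below could flag the characters listed above. The helper names `findRiskyHeaderChars` and `containsBackslash` are hypothetical and are not part of the template scripts.

```typescript
// Characters the post lists as potentially problematic in column names:
// \ / - _ . : ; ( ) & $
const RISKY_HEADER_CHARS = "\\/-_.:;()&$";

// Hypothetical helper: returns each risky character found in a header, once,
// in the order it first appears.
function findRiskyHeaderChars(header: string): string[] {
  const found: string[] = [];
  for (let i = 0; i < header.length; i++) {
    const ch = header.charAt(i);
    if (RISKY_HEADER_CHARS.indexOf(ch) !== -1 && found.indexOf(ch) === -1) {
      found.push(ch);
    }
  }
  return found;
}

// Hypothetical helper: true when a data value contains a backslash.
function containsBackslash(value: string): boolean {
  return value.indexOf("\\") !== -1;
}
```

A header that returns a non-empty list is not guaranteed to fail; it is only a candidate to rename or test first.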

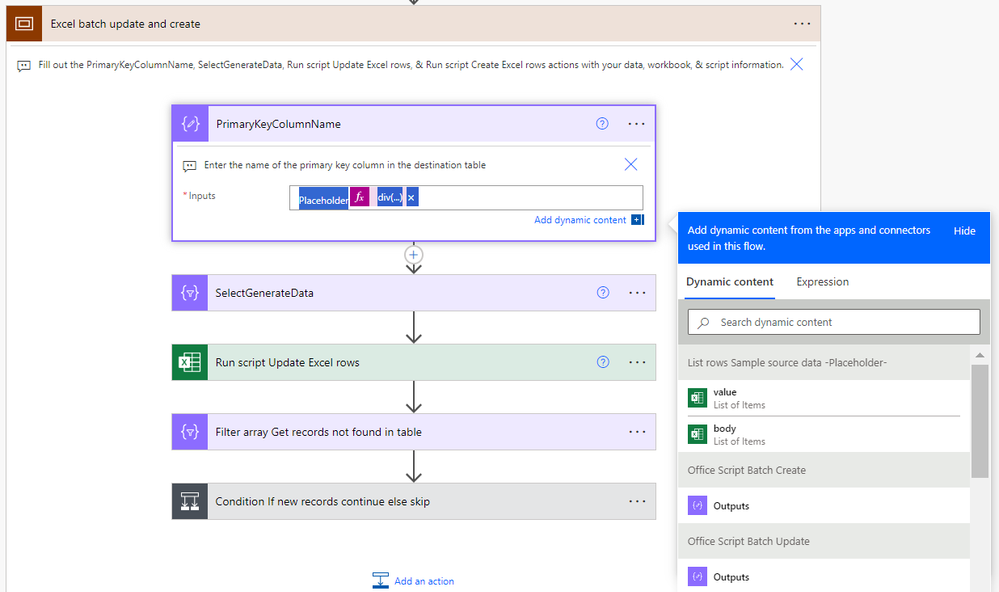

Version 7 Note

Diverging from what is shown in the video, I was able to remove almost all the flow actions in the "Match new and existing data key values then batch update" scope after replicating their functions in the scripts themselves. The flow now goes directly from the "SelectGenerateData" action to the "Run script Update Excel rows" action, and the script handles matching up the UpdatedData JSON keys/field names to the destination table headers.

Version 7 also changes the primary key set-up in the SelectGenerateData action and changes the logic for skipping cell value updates & blanking out cell values.

Now the primary key column name from the destination table must be present in the SelectGenerateData action with the dynamic content for the values you want to match up to update. There is no more 'PrimaryKey' line; the update script automatically references the primary key column in the SelectGenerateData data based on the PrimaryKeyColumnName input on the update script action in the flow.
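As a rough picture of what matching JSON keys to table headers involves, here is a minimal sketch. It is an illustration under assumptions, not the V7 script: `matchKeysToHeaders` and the sample field names are hypothetical, and the real script may treat unmatched keys differently.

```typescript
interface KeyMatchResult {
  matched: { [key: string]: number }; // record key -> column index in the table
  unmatched: string[];                // record keys with no matching header
}

// Hypothetical helper: look up each key of one UpdatedData record in the headers.
function matchKeysToHeaders(
  headers: string[],
  record: { [key: string]: unknown }
): KeyMatchResult {
  const matched: { [key: string]: number } = {};
  const unmatched: string[] = [];
  for (const key of Object.keys(record)) {
    const columnIndex = headers.indexOf(key);
    if (columnIndex === -1) {
      unmatched.push(key);
    } else {
      matched[key] = columnIndex;
    }
  }
  return { matched, unmatched };
}
```

Because the lookup is by header text, no column names need to be typed into the script itself.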

Now leaving a blank "" in the SelectGenerateData action will make that cell value in the Excel table empty, while leaving a null in the SelectGenerateData action will skip the update and leave the cell value that currently exists in the Excel table unchanged.
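The blank versus null rule can be sketched like this. It is a minimal illustration of the behavior described above, not the script's code; `applyRowUpdate`, `UpdateValue`, and the sample columns are hypothetical.

```typescript
type ExistingValue = string | number | boolean;
type UpdateValue = ExistingValue | null;

// Hypothetical helper: apply one update record to one existing row.
// null  -> keep the current cell value
// ""    -> blank out the cell
// other -> overwrite the cell
function applyRowUpdate(
  headers: string[],
  currentRow: ExistingValue[],
  update: { [column: string]: UpdateValue }
): ExistingValue[] {
  return headers.map((header, col) => {
    if (!Object.prototype.hasOwnProperty.call(update, header)) {
      return currentRow[col]; // column not included in the update at all
    }
    const incoming = update[header];
    return incoming === null ? currentRow[col] : incoming;
  });
}

const exampleRow = applyRowUpdate(
  ["ID", "Name", "Email"],
  [7, "Ann", "ann@example.org"],
  { ID: 7, Name: "", Email: null }
);
// exampleRow is [7, "", "ann@example.org"]: Name is blanked, Email is left alone.
```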

Version 6 which looks closer to the version shown in the video can still be accessed here: https://powerusers.microsoft.com/t5/Power-Automate-Cookbook/Excel-Batch-Create-Update-and-Upsert/m-p...

Version 7 Set-Up Instructions

Go to the bottom of this post & download the BatchExcel_1_0_0_xx.zip file. Go to the Power Apps home page (https://make.powerapps.com/). Select Solutions on the left-side menu, select Import solution, Browse your files & select the BatchExcel_1_0_0_xx.zip file you just downloaded. Then select Next & follow the menu prompts to apply or create the required connections for the solution flows.

Once imported, find the Batch Excel solution in the list of solutions & click it to open the solution. Then click on the Excel Batch Upserts V7 item to open the flow. Once inside the flow, delete the PlaceholderValue Delete after import action.

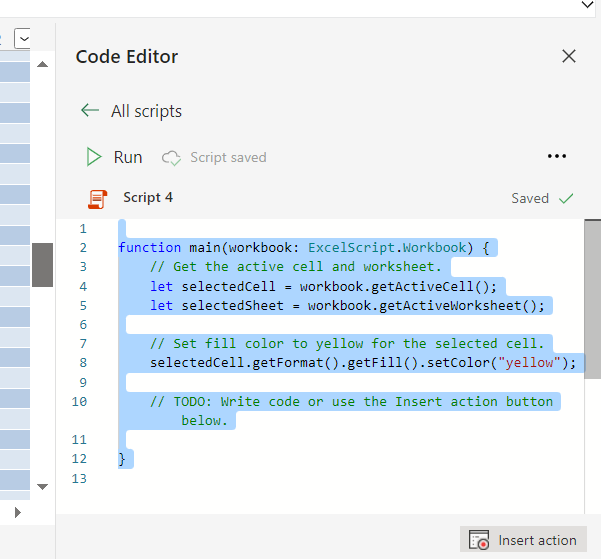

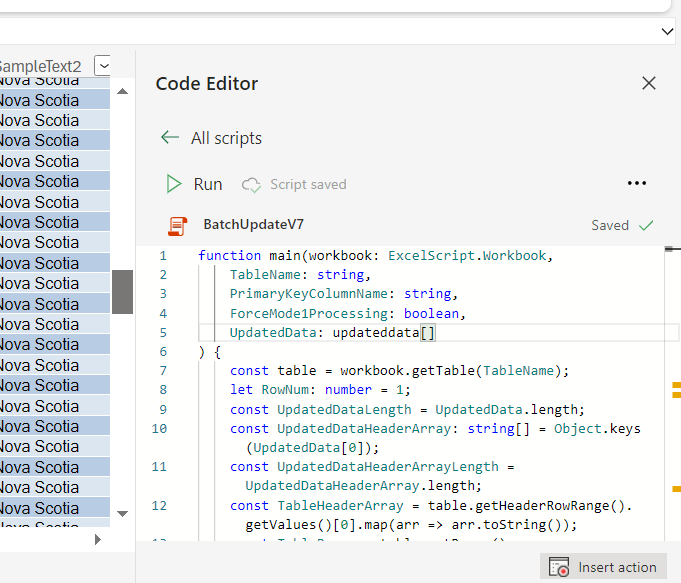

Open the Office Script Batch Update compose action to view the BatchUpdateV7 script code. Select everything inside the compose input & control + C copy it to the clipboard.

Then find & open an Excel file in Excel Online. Go to the Automate tab, click on All Scripts & then click on New Script.

When the new script opens, select everything in the script & control + V paste the BatchUpdateV7 script from the clipboard into the code editor. Then rename the script BatchUpdateV7 & save it. That should make the BatchUpdateV7 script reference-able in the later Run script flow action.

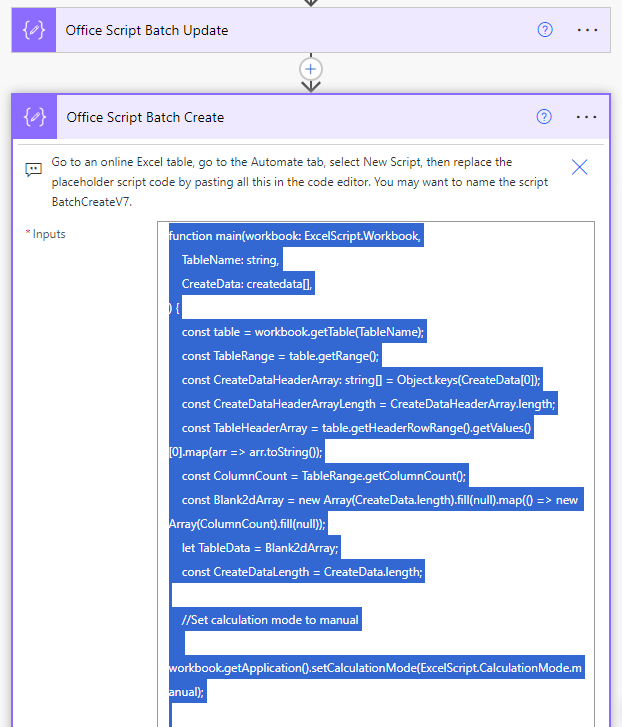

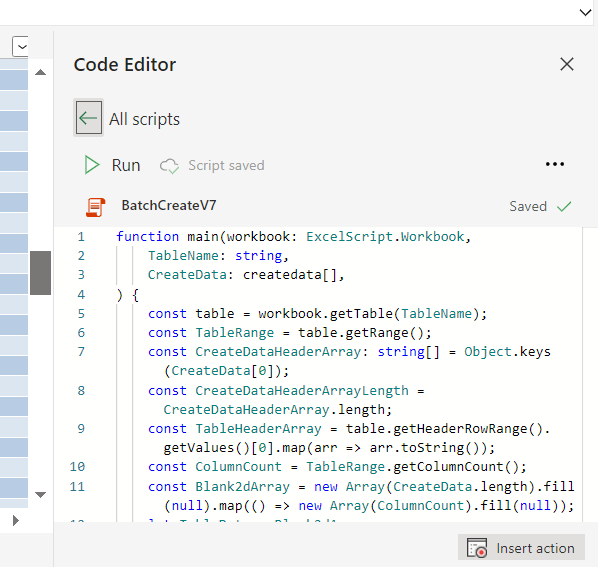

Do the same process to import the BatchCreateV7 script.

Then go to the List rows Sample source data action. If you are going to use an Excel table as the source of updated data, then you can fill in the Location, Document Library, File, & Table information on this action. If you are going to use a different source of updated data like SharePoint, SQL, Dataverse, an API call, etc, then delete the List rows Sample source data placeholder action & insert your new get data action for your other source of updated data.

Following that, go to the Excel batch update & create scope. Open the PrimaryKeyColumnName action, remove the placeholder values in the action input & input the column name of the unique primary key for your destination Excel table. For example, I use ID for the sample data.

Then go to the SelectGenerateData action.

If you replaced the List rows Sample source data action with a new get data action, then you will need to replace the values dynamic content from that sample action with the new values dynamic content of your new get data action (the dynamic content that outputs a JSON array of the updated data).

In either case, you will need to input the table header names from the destination Excel table on the left & the dynamic content for the updated values from the updated source action on the right. You MUST include the column header for the destination primary key column & the primary key values from the updated source data here so the Update script can match up which rows in the destination table need which updates from the source data. All the other columns are optional; you only need to include them if you want to update their values.

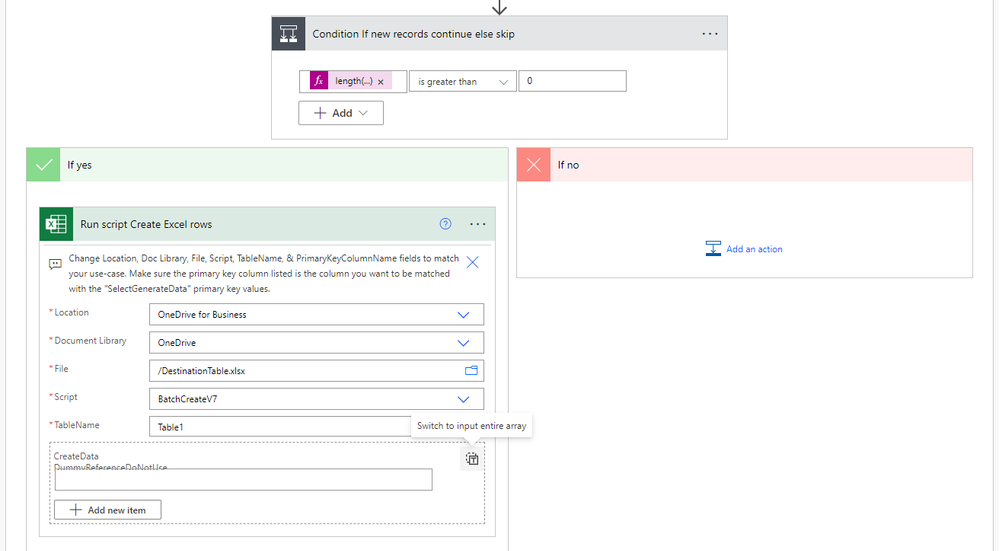
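In code terms, the SelectGenerateData step behaves roughly like an array map from source fields to destination headers. The sketch below only illustrates the shape of the resulting UpdatedData array; the source field names (`EmployeeId`, `FullName`, `WorkEmail`) and the `Name` and `Email` headers are hypothetical, while `ID` is the primary key column used for the sample data.

```typescript
// Hypothetical shape of one row from the updated source data.
interface SourceRow {
  EmployeeId: number;
  FullName: string;
  WorkEmail: string | null;
}

// One UpdatedData record: keys are destination table headers.
type UpdatedRecord = { [destinationHeader: string]: string | number | null };

// Hypothetical equivalent of the Select action: destination header on the left,
// source value on the right. The primary key column (ID) must be included.
function selectGenerateData(sourceRows: SourceRow[]): UpdatedRecord[] {
  return sourceRows.map((row) => ({
    ID: row.EmployeeId,
    Name: row.FullName,
    Email: row.WorkEmail,
  }));
}
```

The output of this step is the JSON array that gets passed to the UpdatedData input of the update script action.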

After you have added all the columns & updated data you want to the SelectGenerateData action, then you can move to the Run script Update Excel rows action. Here add the Location, Document Library, File Name, Script, Table Name, Primary Key Column Name, ForceMode1Processing, & UpdatedData. You will likely need to select the right-side button to switch the UpdatedData input to a single array input before inserting the dynamic content for the SelectGenerateData action.

Then open the Condition If new records continue else skip action & open the Run script Create Excel rows action. In this run script action input the Location, Document Library, Script, Table Name, & CreateData. You again will likely need to select the right-side button to change the CreateData input to a single array input before inserting the dynamic content for the Filter array Get records not found in table output.

If you need just a batch update, then you can remove the Filter array Get records not found in table & Run script Create Excel rows actions.

If you need just a batch create, then you can replace the Run script Batch update rows action with the Run script Batch create rows action, delete the update script action, and remove the remaining Filter array Get records not found in table action. Then any updated source data sent to the SelectGenerateData action will just be created; the flow won't check for rows to update.

Thanks for any feedback,

Please subscribe to my YouTube channel (https://youtube.com/@tylerkolota?si=uEGKko1U8D29CJ86).

And reach out on LinkedIn (https://www.linkedin.com/in/kolota/) if you want to hire me to consult or build more custom Microsoft solutions for you.

Office Script Code

(Also included in a Compose action at the top of the template flow)

Batch Update Script Code: https://drive.google.com/file/d/1kfzd2NX9nr9K8hBcxy60ipryAN4koStw/view?usp=sharing

Batch Create Script Code: https://drive.google.com/file/d/13OeFdl7em8IkXsti45ZK9hqDGE420wE9/view?usp=sharing

(ExcelBatchUpsertV7 is the core piece, ExcelBatchUpsertV7b includes a Do until loop set-up if you plan on updating and/or creating more than 1000 rows on large tables.)
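The batching idea behind the Do until loop can be pictured as splitting the source rows into fixed-size chunks and sending one chunk per iteration. This is a minimal in-memory sketch; `splitIntoBatches` is a hypothetical helper, and the actual flow fetches each batch from the data source inside the loop rather than slicing an array.

```typescript
// Hypothetical helper: split items into consecutive batches of at most batchSize.
function splitIntoBatches<T>(items: T[], batchSize: number): T[][] {
  if (!(batchSize >= 1) || Math.floor(batchSize) !== batchSize) {
    throw new Error("batchSize must be a positive whole number");
  }
  const batches: T[][] = [];
  for (let start = 0; start < items.length; start += batchSize) {
    batches.push(items.slice(start, start + batchSize));
  }
  return batches;
}
```

With 5,500 source rows and a batch size of 1000, this gives 6 batches, the last holding 500 rows. A smaller batch size means more loop iterations but a smaller payload per Run script call.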

watch?v=HiEU34Ix5gA

You got the JSON part figured out & it’s now giving a 502 gateway error code on the Update Script action?

I still don’t see anything really unusual about your data.

There are a few columns with potentially mixed data types like ones that may have date numbers & “N/A” string values.

But I’m not immediately thinking of how that may cause an error.

Maybe in general you could try testing it with different columns, & if some work & others don’t, then you could continue to narrow down where the issue is that way.

At this point, I’d probably need to get on a video call to figure out what is going wrong, but I’m a little busy with a larger Power App build with my employer at the moment.

As far as I know, I have never had any problems with JSON. But it is true that I am now getting the 504 & 502 errors (alternately).

In the meantime, I have downloaded the entire flow again and implemented it in a (much) smaller (test) file.

But unfortunately, no success this time 😕

Now this is the problem I am facing:

The "Run script Update Excel rows" action is failing

We were unable to run the script. Please try again.

Runtime error: Line 8: Cannot read property 'getRangeBetweenHeaderAndTotal' of undefined

client

Edit:

Never mind, after selecting the table again in the destination file, it works.

Line 8 is calling the table through the table name given in the Power Automate Run Script action, then counting the number of rows after the header.

Given it is the 1st thing the script does & is not a complex function, I’m not sure what the problem would be on the coding side.
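For anyone hitting the same "Cannot read property 'getRangeBetweenHeaderAndTotal' of undefined" message, it is consistent with the table lookup returning undefined, which would fit the fix of re-selecting the table in the Run script action. Below is a sketch of a guard around that kind of call. The `WorkbookLike`, `TableLike`, and `RangeLike` interfaces are minimal stand-ins written for this example so it runs outside Excel; they mirror the Office Scripts methods `getTable`, `getRangeBetweenHeaderAndTotal`, and `getRowCount`. `countTableRows` is a hypothetical helper, not the script's actual line 8.

```typescript
// Minimal stand-ins for the Office Scripts objects used here (assumption:
// only these three methods are needed for the illustration).
interface RangeLike {
  getRowCount(): number;
}
interface TableLike {
  getRangeBetweenHeaderAndTotal(): RangeLike;
}
interface WorkbookLike {
  getTable(name: string): TableLike | undefined;
}

// Hypothetical helper: count body rows, failing with a clearer message
// when the table name does not resolve to a table in the workbook.
function countTableRows(workbook: WorkbookLike, tableName: string): number {
  const table = workbook.getTable(tableName);
  if (!table) {
    throw new Error(
      'Table "' + tableName + '" was not found. Check the Table Name input on the Run script action.'
    );
  }
  return table.getRangeBetweenHeaderAndTotal().getRowCount();
}
```

The guard does not fix a wrong table name, but it turns the generic runtime error into one that points at the input to check.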

Firstly, thank you for your time in creating the scripts and flows.

I am having some issues integrating this into my own flows.

I was hoping to just use the BatchCreateV5 script to add data retrieved from a MySQL database into an Excel file, but it doesn't seem to be working.

The screenshot below seems to indicate that it was successful, but the table remains blank.

Here I am again. Sorry to bother you again.

I've started all over and configured everything from scratch, and this finally seems to work... partly, anyway.

It seems that no new cells are added, only the existing ones are updated.

The source file has about 5500 rows. But, if I set the top count to 2000, it also stops at 2000 and does not continue batching.

Your help would again be greatly appreciated

If only the smaller batch sizes are working, then you can try using BatchUpsertV5.2b.

Version b has a Do until loop, so it keeps getting the next batch of data until it is done.

@takolota

Even smaller batch sizes aren't working. It actually only performs the 'update' part of the flow,

although the batch create is always marked as successfully completed after a test.

Have now implemented version B.

It works, but...

Only when the batch size is 100 (or lower). But then the flow takes about 50 minutes.

When the batch size is bigger (250, 300 or 500), the flow fails during the "Run script Update Excel rows" action and gives the error below.

We were unable to run the script. Please try again.\nOffice JS error: Line 171: Range getCell: The request failed with status code of 404, error code ResourceNotFound and the following error message: Invalid version

I don't mind the long duration, but is there any way to make it go faster anyway? Or is the batch size the main cause of this?

Thanks!

@Yutao

I think I'm out of my depth on this issue with @Dark4499 because the limitation may be at a deeper level of abstraction. I see you have helped people with this error in similar programs: https://powerusers.microsoft.com/t5/Using-Flows/Trying-to-use-Office-Scripts-and-Flow-to-copy-from-o...

I don't see anything too out of the ordinary with his data, other than the fact he is updating 45 columns in a 90+ column table.

I have been able to update 100s of rows in a 200+ column table on my end. So I'm not sure where the difference is in the data sets or in the runs of this flow.

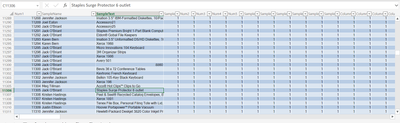

Example of the quick test data I generated for 200+ columns & 25,000 rows...

Maybe it is somehow an issue with the actual character count of cells in the receiving table or in the input array payload?

I'm just not really understanding what is limiting him on this.

When trying to import your ExcelBatchUpsertV5.2.zip, I am getting an error on the resource OpExOptimization@ghsc-psm.org. It is related to the Excel Online (Business) connection.

Because of this, the flow will not import. What is this resource?