- Microsoft Power Automate Community

import csv

Title: Import CSV File

I have created a csv import 2.0

It is smaller and simpler.

Find it here

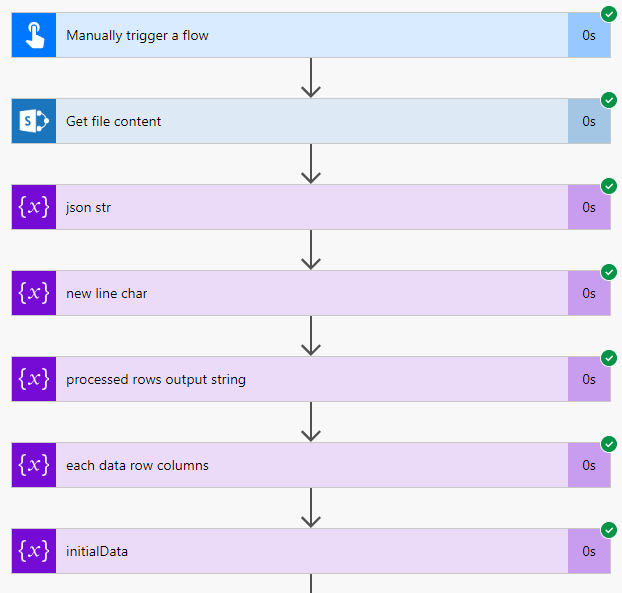

Description: This flow allows you to import csv files into a destination table.

I have actually uploaded two flows. One is a compact version that skips the step that makes the columns accessible as dynamic JSON content.

In the compact version you can still access the columns easily by their column indexes: [0], [1], [2], and so on.

Compact Version:

This step is where the main difference lies. We don't recreate the JSON; we simply write our data columns by accessing them via column indexes (in the apply to each actions). This cuts the run time by a further 30% to 40%.
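As a rough illustration of index-based column access, here is a minimal Python sketch. It is not the flow's own expression syntax; the function name `split_rows` and the sample data are made up for the example, and it assumes no field contains a comma.

```python
def split_rows(csv_text: str) -> list[list[str]]:
    """Split raw CSV text into rows, then split each row into columns.

    Assumes no field contains a comma (hypothetical helper, for illustration).
    """
    rows = []
    for line in csv_text.splitlines():
        if line.strip():  # skip blank lines
            rows.append(line.split(","))
    return rows


# Made-up sample data: a header row followed by two data rows.
sample = "id,name,cost\n1,Widget,9.99\n2,Gadget,19.50\n"
rows = split_rows(sample)
header, data = rows[0], rows[1:]

# Columns are read by position, with 0 being the first column.
names = [row[1] for row in data]
costs = [row[2] for row in data]
print(names, costs)
```

Skipping the intermediate JSON means each row is only split once and then read by position, which is the idea behind the compact version.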

Detailed Instructions:

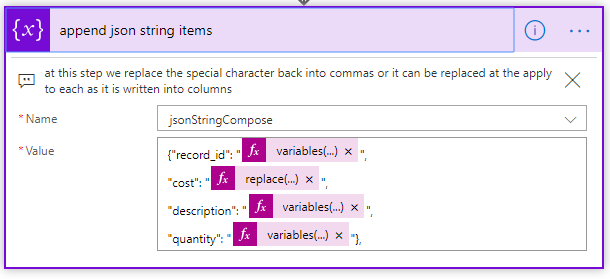

** You can add or remove columns if you need to. Follow the formatting and pattern in the "append json string items" action and anywhere else columns are accessed by column index, with 0 being the first column, for example "variables('variable')[0]" or "split(. .. . )[0]".

Import the package attached below into your own environment.

You only need to change the action that gets the file content, for example to the SharePoint or OneDrive get file content action.

The file in my example is located on premises.

Replacing the get file content action removes any references to its output, so add the new action's output back in the step where it was used.

Please read the comments within the flow steps; they should explain how to use this flow.

Watch where your money columns, or other columns containing commas, fall: this flow replaces the commas inside them with asterisks. When you write the data, find the money columns and remove those commas (now asterisks), since the value will most likely go into a currency column.
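The comma-to-asterisk idea can be sketched in Python as follows. This is only an illustration of the technique, not the flow's actual expressions; the helper names `mask_quoted_commas` and `parse_money` are hypothetical, and the sketch assumes fields with commas are wrapped in double quotes and that the data contains no real asterisks or escaped quotes.

```python
import re


def mask_quoted_commas(line: str, placeholder: str = "*") -> str:
    """Replace commas inside double-quoted fields with a placeholder and drop the quotes."""
    def _mask(match: re.Match) -> str:
        return match.group(1).replace(",", placeholder)

    return re.sub(r'"([^"]*)"', _mask, line)


def parse_money(masked: str, placeholder: str = "*") -> float:
    """Strip the placeholder (the former thousands separator) and convert to a number."""
    return float(masked.replace(placeholder, "").replace("$", ""))


# Made-up row: the cost field contains a comma, so it is quoted in the file.
line = 'Widget,"1,250.00",In stock'
masked = mask_quoted_commas(line)
columns = masked.split(",")   # now safe to split on commas
cost = parse_money(columns[1])
print(masked, columns, cost)
```

For a text column where the comma is meaningful, the placeholder would be swapped back to a comma instead of being removed.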

Also check the JSON step carefully, since it is what lets you access your columns dynamically in the following steps.

You will need to modify the JSON schema to match your column names and types. The column names and types appear inside the properties brackets.

In this step of your JSON, notice that my values have quotes because mine are all string type, even the cost.

If you have number types, remove the quotes (around the variables) at the step where the items are appended. You will probably also need to remove the comma from the money value (replace it with nothing).
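To show the difference quoting makes, here is a small Python sketch that builds a JSON item by hand, roughly the way an append-to-string step would. The column names `name` and `cost` and the helper `build_item` are assumptions for the example, not the names used in the flow.

```python
import json


def build_item(columns: list[str]) -> str:
    """Build one JSON object as a string: the name is quoted, the cost is not."""
    name = columns[0]
    cost = columns[1].replace(",", "")  # remove the thousands separator
    # String values keep their quotes; number values are written without them.
    return '{"name": "%s", "cost": %s}' % (name, cost)


item = build_item(["Widget", "1,250.00"])
parsed = json.loads(item)
print(item)
print(type(parsed["name"]).__name__, type(parsed["cost"]).__name__)
```

If the quotes were left around the cost, the parsed value would be a string and would not match a number type in the schema.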

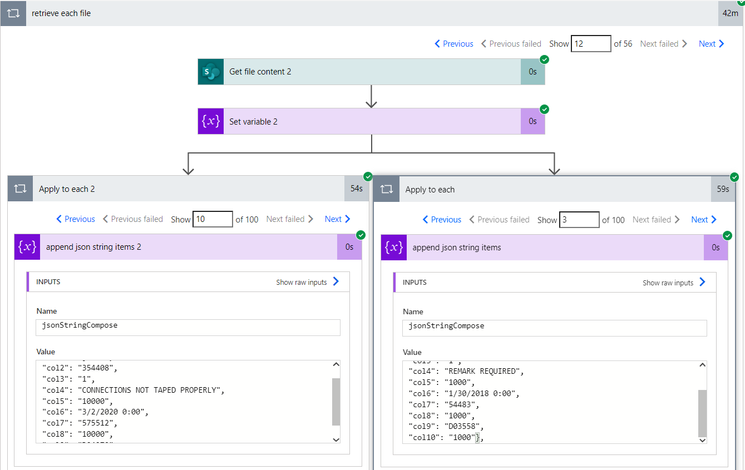

This step will control how many records you are going to process.

The flow is set up to process 200 rows per loop. Do not change this value; because the loops are nested, a larger value may go over the limit.

It will detect how many loops it needs to perform. So if you have 5,000 rows it will loop 25 times.

You should change the count, though. Make sure the count is higher than the number of loops; the count just prevents the flow from looping indefinitely.
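The loop arithmetic above can be checked with a short Python sketch. The names `BATCH_SIZE`, `loops_needed`, and `batches` are illustrative only; the 200-row batch size comes from the description above.

```python
import math

BATCH_SIZE = 200  # rows processed per loop, as described above


def loops_needed(row_count: int, batch_size: int = BATCH_SIZE) -> int:
    """Number of loops required to cover every row, rounding up for a partial batch."""
    return math.ceil(row_count / batch_size)


def batches(rows: list, batch_size: int = BATCH_SIZE):
    """Yield consecutive slices of at most batch_size rows."""
    for start in range(0, len(rows), batch_size):
        yield rows[start:start + batch_size]


print(loops_needed(5000))                      # 25
print(len(list(batches(list(range(450))))))    # 3
```

Whatever count you set should be larger than the value `loops_needed` returns for your file, so the loop ends because the data ran out rather than because the count was reached.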

Note: This flow can take a long time to run. The more rows with commas in the columns, the longer it takes.

9-25-20 - A new version of the flow is available. It is optimized and should run 40% to 50% faster.

Questions: If you have any issues running it, most likely I can figure it out for you.

Anything else we should know: You can easily change the trigger type to be scheduled, manual, or when a certain event occurs.

The csv file must be text based, that is, saved as plain text with a csv extension (or txt in some cases).

Note: any file with a csv extension will probably show with an Excel icon.

The best way to find out if it will work is to right-click it on your computer and open it in WordPad or Notepad. If you see the data, it will work. If you see unreadable characters, it is an Excel-based file and won't work.

An Excel-based file is of type object and can't be read as a string in this flow.

You can easily convert it by saving the Excel file as CSV UTF-8 (Comma delimited) in the Save As options.

It should also work on an Excel file without table format, as long as you convert it to csv the same way and meet the same extension requirement.
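The open-it-in-Notepad check can also be approximated in code. The Python sketch below is an assumption-laden illustration, not part of the flow: it looks for the ZIP signature that .xlsx files start with and the OLE2 signature that legacy .xls files start with, then checks that the bytes decode as UTF-8. The function name `looks_like_text_csv` is hypothetical.

```python
XLSX_MAGIC = b"PK\x03\x04"        # .xlsx files are ZIP archives
XLS_MAGIC = b"\xd0\xcf\x11\xe0"   # legacy .xls files are OLE2 compound documents


def looks_like_text_csv(data: bytes) -> bool:
    """Return True if the bytes look like plain text rather than an Excel workbook."""
    if data.startswith(XLSX_MAGIC) or data.startswith(XLS_MAGIC):
        return False
    try:
        # utf-8-sig also accepts the byte order mark that "CSV UTF-8" files begin with.
        data.decode("utf-8-sig")
    except UnicodeDecodeError:
        return False
    return True


print(looks_like_text_csv(b"id,name\n1,Widget\n"))
print(looks_like_text_csv(b"PK\x03\x04..."))
```

A file saved with another text encoding would fail the UTF-8 check here even though it is text based, so treat a False result as a prompt to inspect the file, not as proof.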

** If the file is on SharePoint, save it as CSV UTF-8 and then change the extension to .txt, or SharePoint will force it to open as an actual Excel spreadsheet file.

You may also need to use the .txt extension with "get file content" actions other than SharePoint's. I know for a fact that on-premises files can keep the .csv extension.

My sample run had 12,000 rows and 10 columns; you can have any number of columns.

This is just my sample run.

@juresti, Nice work! Thank you for sharing this with the community!

I was able to customize your Flow (in rather short order) to allow the upload of CSV files from PowerApps. I've attached the customized Flow and a sample PowerApp below.

Take care!

Wow @seadude - nice work. I have bookmarked this thread with the intention of getting back to it to do what you just did for me - CSV upload via Power Apps!

@seadude you, sir, are my personal hero. This solution saved me at least a few days of searching, testing, and failing.

Thank you so much! 💪

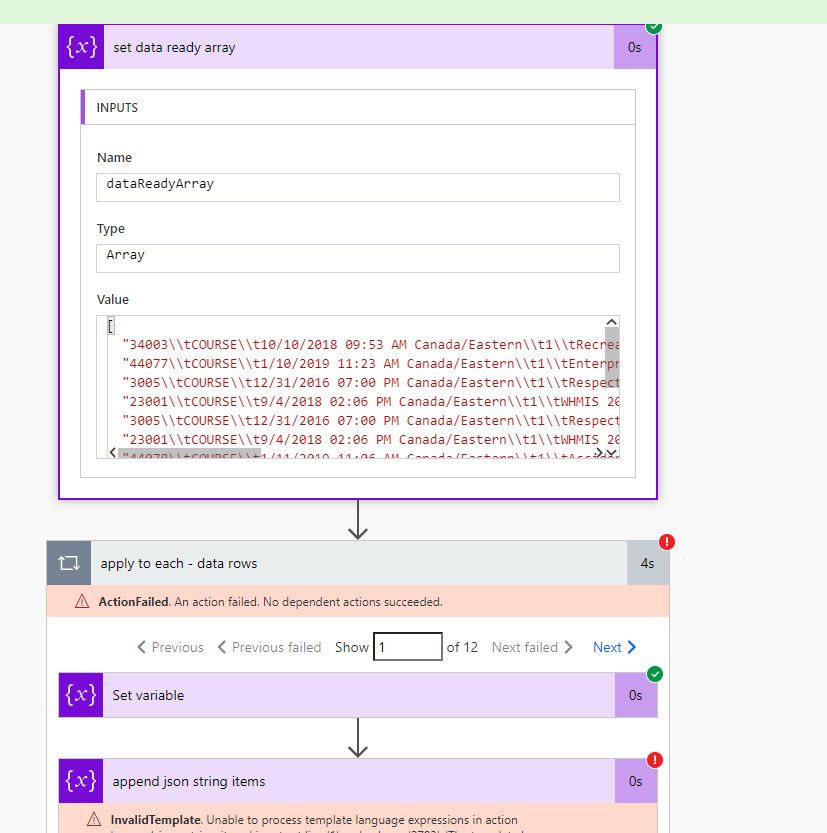

@juresti I'm having a problem getting the array data ready. For some reason the commas are being removed. I've setup the array

Any help would be great!

Hi, what does your Get File Content look like?

From your screenshot, your file looks tab delimited (\t).

I have a flow that works with tab-delimited files; it is much shorter since it does not involve handling the commas.

Or you can save the file as csv by opening Excel first and then opening your file through Excel.

You will be able to see if you have commas by looking at your Get File Content.

My data was coming through with spaces in some cases and commas in others; why, I don't know.

I've amended the compose script to substitute the tab with a comma, and everything seems to be working now.

Hi @juresti ,

Thanks so much for sharing this. I've been able to get the csv version to work. My problem is that the csv I'm running it on has lots of rows and lots of columns, and lots of those cells contain commas, so the flow takes far too long to run on the entire dataset.

The flow runs quickly if I remove the problem rows and the comma-search elements of the flow. I can get a tab-delimited (.tsv) file instead of the csv from my system, so I'm thinking that would solve my problem, as the search and replace on commas seems to be what takes so much time.

Would you be able to share the .tsv version of the flow?

Thanks

Hello @charliecantflow

Yes, I'll upload the tab delimited version of the flow to a new recipe post.

I just need to prepare it for import and check all the comments in the actions.

I'll post it very soon.

Thanks @juresti .

I managed to get the tab-delimited version working myself by adjusting the replace expression to remove quotes from the initilData variable, and by changing the eachDataRow variable to split on "\t" instead of the comma. The flow runs in 10 minutes now and works perfectly, so thanks again.

I'm still interested to see your working .tsv version so I can check mine against it, in case I missed something.
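For anyone comparing notes, the tab-delimited approach described above (remove the quotes, then split each row on "\t") looks roughly like this in Python. This is a sketch of the idea only, not the flow itself; `parse_tsv` and the sample data are made up.

```python
def parse_tsv(text: str) -> list[list[str]]:
    """Remove double quotes, then split each non-empty line on tab characters."""
    cleaned = text.replace('"', "")
    return [line.split("\t") for line in cleaned.splitlines() if line]


# Made-up sample: commas inside fields need no special handling here.
sample = 'id\tname\tcost\n1\t"Widget, large"\t1,250.00\n'
rows = parse_tsv(sample)
print(rows)
```

Because the delimiter never appears inside the fields, there is no comma search-and-replace pass, which is where the csv version spends most of its time.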