OCR input requirements

Hello,

I am attempting to create an AI model to recognise custom document information, and I am running into multiple problems with OCR correctly identifying text. I realise this is a known problem that is being addressed with ongoing updates to the OCR model, as per the Power Automate community forum post 'Problem with Model recognising Zero and letter O'.

I noticed that one of the input requirements for OCR is text of roughly 8pt or larger in order for it to be read. When analysing the document type I am using to train the AI model, at standard size, the font is ~8pt.

Now, I see where this could be a problem with a document that employs raster imaging, in which the number of pixels in an image is predetermined, so the document appears to have lower resolution when you zoom in. The document type that I am attempting to analyse, however, appears to use vector imaging, in which shapes are determined by a set of geometrical equations and the rendering stays sharp the more you zoom in.
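As a rough illustration of why the distinction might matter, here is a minimal sketch that assumes the OCR engine rasterises each page at some fixed resolution before reading it. That is an assumption for illustration, not confirmed AI Builder behaviour, and the DPI values are arbitrary examples. It converts a font size in points into an approximate pixel height using the standard 72 points per inch.

```python
POINTS_PER_INCH = 72  # typographic points per inch


def glyph_height_px(font_size_pt: float, dpi: float) -> float:
    """Approximate pixel height of the font's em box when rendered at `dpi`."""
    return font_size_pt / POINTS_PER_INCH * dpi


# Example resolutions only; the resolution actually used by an OCR engine is unknown here.
for dpi in (96, 150, 300):
    print(f"8pt at {dpi} DPI is about {glyph_height_px(8, dpi):.1f} px tall")
```

Under this assumption, a vector page and a raster page of the same nominal font size end up with the same number of pixels per letter once rendered, so the font size relative to the rendering resolution would matter more than the imaging technique itself.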

My question: does the imaging technique used for a document affect the ability of OCR to correctly identify text? The issue is less prevalent with larger letters within the same document (which use the same imaging technique as the smaller letters).

Appreciate the help!


Hi @JWall - thanks for the question and the detailed analysis.

A few things we can try to see if you see any impact:

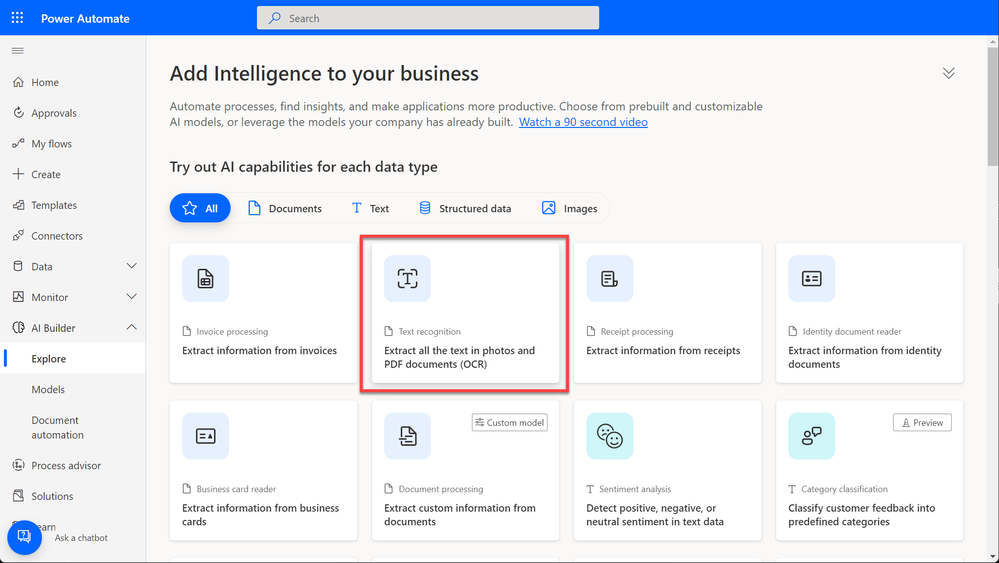

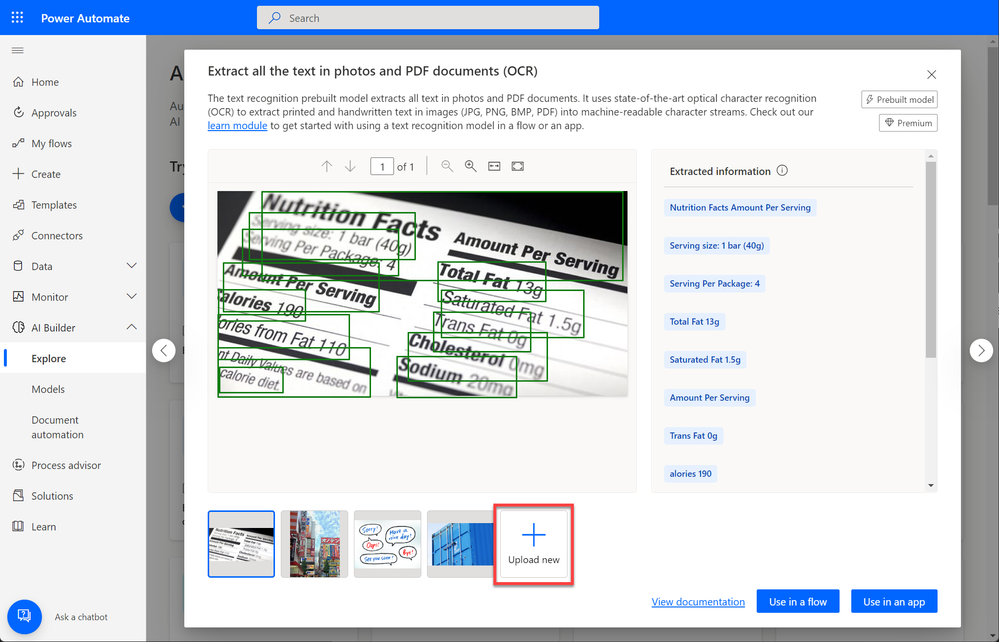

- If you navigate to the homepage of AI Builder (https://aka.ms/tryaibuilder) --> Select Text recognition / Extract all the text in photos and PDF documents (OCR). --> Upload new. Do you get the data extracted as you would expect? The text recognition model has been recently updated with OCR improvements.

- If you print the original PDF as a new PDF, and use the newly generated PDF on the text recognition model as the step before, do you see better results?

- If you try to transform one of the pages of the PDF document into an image (for example by taking a screenshot) and try here again with text recognition, do you notice any improvements?


Hi @JoeF-MSFT

Thanks for the reply!

TL;DR - Trying the different methods appeared to have no impact on the results. I am not sure whether there are any other methods or variables to test. One feature I would suggest is to allow manual entries in AI Builder: you would still highlight the field you want the model to read, but if the model is unable to read the text correctly, there would be an option to manually edit the value read for the field. Feeding those corrections back into the MS OCR recognition model, so that it improves along with end users' AI models, would greatly help the robustness and flexibility of AI Builder.

Unfortunately I am unable to upload as detailed a report as last time due to sensitive information; however, I did run through the situations you suggested. Using the default 'Extract all the text in photos and PDF documents (OCR)' model, I uploaded my original document, a version of the document that was printed as a new PDF (Print -> Save As), and a version of the document that was taken as a screenshot and saved as a JPEG. After seeing the results, I also tried a version of the document that was taken as a screenshot and saved as a PDF.

From a character count perspective, the results from what the AI reads are as follows:

| Input version | Characters read |
| --- | --- |
| LEN(.pdforiginal) | 799 |
| LEN(.jpgss) | 920 |
| LEN(.pdfprint) | 799 |
| LEN(.pdfss) | 500 |

The original PDF and the print -> Save As PDF yielded the same results. Interestingly, the screenshot -> JPEG had the highest character count, while the screenshot -> PDF had the lowest.
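To put those counts side by side, here is a small sketch that expresses each one as a percentage change against the original PDF. The numbers are the ones reported above; the dictionary keys and the `relative_change` helper are names made up for this example.

```python
# Character counts reported in the table above.
counts = {
    "pdforiginal": 799,
    "jpgss": 920,
    "pdfprint": 799,
    "pdfss": 500,
}


def relative_change(count: int, baseline: int) -> float:
    """Percentage change of `count` relative to `baseline`."""
    return (count - baseline) / baseline * 100


baseline = counts["pdforiginal"]
for name, count in counts.items():
    change = relative_change(count, baseline)
    print(f"{name}: {count} chars, {change:+.1f}% vs original")
```

A higher count is not necessarily a better read: the comparison only shows how much text each version produced, not how much of it is correct.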

Now, when comparing to the actual data, I am unable to get an exact character count for the original PDF without meticulously counting it myself. What I can tell is that none of the AI read results correctly extracted the data as I would expect. For example, the sample document has a total of 18 'A's in a table (similar to that of my previous post). None of the AI read results showed any consistency in 1. detecting a 'word', or 2. correctly identifying the 'word'. Surprisingly, the JPEG version performed best at this specific task, correctly identifying ~8 'A's, but again, that is not an adequate result. The original PDF appeared to correctly identify the most characters overall, which helps explain the higher count for the .jpg version: some of its extra characters are false detections. A prime example is the .jpg version identifying a column line as an 'l'.
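The 'A' check described above could be automated along these lines. This is a minimal sketch: the `detection_rate` helper and the sample string are invented for illustration, and it assumes the OCR output has already been flattened to whitespace-separated tokens. Only the expected total of 18 comes from the document described in this post.

```python
def detection_rate(ocr_text: str, target: str, expected: int) -> float:
    """Fraction of the expected standalone `target` tokens found in the OCR text."""
    found = sum(1 for token in ocr_text.split() if token == target)
    # Cap at `expected` so spurious extra detections cannot push the rate above 1.
    return min(found, expected) / expected


# Hypothetical OCR output, invented for illustration only.
sample_output = "A . A B l A . A A | A . A A"
print(f"{detection_rate(sample_output, 'A', expected=18):.0%}")
```

This is a crude measure, since it ignores where in the table each letter was found, but it makes runs on different file versions directly comparable.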

I am not sure whether there are any other methods I could try to troubleshoot or test, other than patiently waiting for improvements to the character recognition model. One suggestion I would make, though, is to allow manual entries in AI Builder: you would still highlight the field you want the model to read, but if the model is unable to read the text correctly, there would be an option to manually edit the value read for the field. Feeding those corrections back into the MS OCR recognition model, so that it improves along with end users' AI models, would greatly help the robustness and flexibility of AI Builder.

Appreciate the help, and let me know if you have any more thoughts. Thanks!


Hi @JWall - I really appreciate the detailed investigation! And thanks for the suggestion to allow users to correct the detected words while tagging the documents. This is indeed something we don't have today.

I'm curious about those 'A's that are not detected. I understand that the documents contain sensitive information. Would it be possible to share just a screenshot of a word where an 'A' is not detected? Or maybe a partial screenshot of that word?


Hi @JoeF-MSFT - Sure.

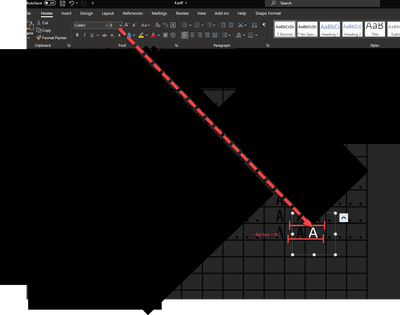

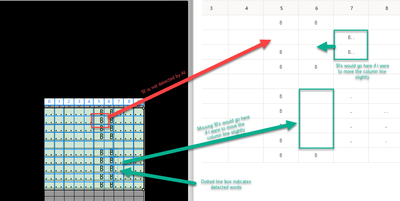

For this specific example I have an array of letters in a table. The letters aren't always 'A', nor are they always aligned in a linear layout. The first screenshot shows an example of a letter not being detected. All 'B's are detected by the OCR software except for the 'B' highlighted in red. The other 'B's that are either missing from or misplaced in the table on the right are correctly identified as text by OCR, and can easily be fixed by moving the column line. You may also notice that the array has '.' in some of the fields. These are detected with varying degrees of success: sometimes they are picked up, and sometimes they are not. I am not so concerned with this, as '.' can be treated as a blank in my use case, but you may find it interesting for your purposes.

The second screenshot is a slightly different example, where the OCR is detecting a column line as text ('|'). Sometimes OCR will also detect column lines as 'l'.
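One possible post-processing step for this kind of false detection is sketched below. It rests on an assumption specific to tables like this one, namely that real cells hold a single uppercase letter or '.', so a standalone 'l' or '|' is more likely a table rule than a value. The `drop_line_artifacts` helper is a hypothetical name and is not part of AI Builder.

```python
# Assumption: genuine cell values are single uppercase letters or '.',
# so a lone 'l' or '|' token is treated as a misread column line.
LINE_LOOKALIKES = {"l", "|"}


def drop_line_artifacts(tokens: list[str]) -> list[str]:
    """Remove standalone tokens that look like misread column lines."""
    return [token for token in tokens if token not in LINE_LOOKALIKES]


print(drop_line_artifacts(["A", "|", "B", "l", "."]))
```

This would not recover letters that were missed entirely, such as the highlighted 'B'. It only removes one class of spurious characters.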

Again, I want to highlight that I presumed this problem was possibly due to the OCR not being accurate with font sizes <= ~8pt, but I was curious whether the imaging type (vector vs raster) would have an effect on that. The PDFs used here were vector.

On an alternate note, I am going to attempt to work around the need to use OCR. The documents I was trying to use with OCR are all standard tables internal to our company. The thing is that they are 1. versions of pivot tables that make them easier for a human to read, and 2. in PDF format and thus not as easy for a computer to read (the problem I was trying to solve with OCR). I am working with some people in my company to gain some additional information, but I would think there is some rawer data driving these PDF documents (strings in an array, a tabular format, or something like that), which a computer may have an easier time reading. If this information exists and I can get access to it, then I should be able to skip running through OCR and creating an AI model to recognise custom document information.
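If such raw data does turn out to exist, reading it could be as simple as the sketch below. Everything about the input is hypothetical: the CSV layout, the `row` and `col_*` column names, and the values are invented for illustration, and the real export may look nothing like this. The only detail taken from this thread is that '.' can be treated as a blank.

```python
import csv
import io

# Hypothetical raw export, invented for illustration only.
raw_export = """row,col_1,col_2,col_3
r1,A,.,B
r2,.,A,A
"""


def unpivot(text: str) -> list[tuple[str, str, str]]:
    """Turn a wide table into (row, column, value) records, skipping '.' blanks."""
    records = []
    for row in csv.DictReader(io.StringIO(text)):
        label = row.pop("row")
        for column, value in row.items():
            if value != ".":
                records.append((label, column, value))
    return records


print(unpivot(raw_export))
```

Reading structured data this way sidesteps character recognition altogether, so the font size and column line issues above would no longer apply.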

Anyways, I appreciate the help, and hopefully I was able to help with your inquiry.
