- Microsoft Power Automate Community

- Welcome to the Community!

- News & Announcements

- Get Help with Power Automate

- General Power Automate Discussion

- Using Connectors

- Building Flows

- Using Flows

- Power Automate Desktop

- Process Mining

- AI Builder

- Power Automate Mobile App

- Translation Quality Feedback

- Connector Development

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read Only)

- Power Platform and Dynamics 365 Integrations (Read Only)

- Galleries

- Community Connections & How-To Videos

- Webinars and Video Gallery

- Power Automate Cookbook

- Events

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Community Blog

- Power Automate Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

- Microsoft Power Automate Community

- Galleries

- Power Automate Cookbook

- Re: Import Tab Delimited File

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Import Tab Delimited File

Title: Import Tab Delimited File

Description: This flow allows you to import tab delimited files into a destination table.

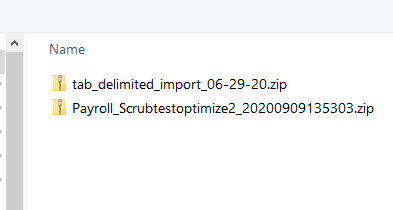

Detailed Instructions: Import the package attached below into your own environment.

You need to change the action where I get the file content from, such as sharepoint, onedrive, or other storage locations.

Please read the comments within the flow steps, they should explain using this flow.

If you have number types remove the quotes (around the variables) at this step where the items are appended.

Notice all my variables have quotes and are all string type. If one were a number type it would not have quotes.

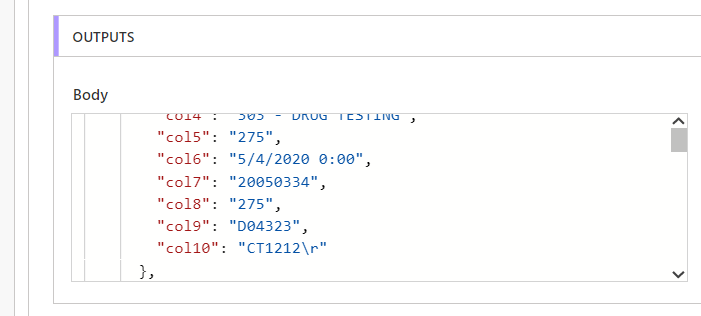

All column names should remain with quotes. You will also rename your columns here and add or remove.

Just keep the format - comma after each column name : data item except the last one.

Also make note of how each column is accessed here with the formula variables('varname')[0], then variables('varname')[1] ... and so on where [0] is the place of the column in your data in order starting from 0.

zero is one, 1 is two, 2 is three, and so on.

You will need to modify the JSON schema to match your column names and types, you should be able to see where the column names and types are within the properties brackets.

There is no need to rebuild the entire schema, simply rename the columns and change their type if needed.

Also add and remove columns, as this JSON will allow you to select the columns dynamically for writing your data.

The current column names are col0, col1, col2, etc... and I set them all type string.

The names and types should match your appended json from image above.

I don't have memorized all the types but there is string, integer, float? maybe? and other and will need to be looked up.

This step will control how many records you are going to process.

The flow is set up to process 100 rows and should not be changed or due to the nesting of loops it will go over the limit.

You should change the number of loops though. In the pic it is set it to 5 loops. That's 5 x 100 rows = 500 rows.

So if you have 8,000 rows you would have 80 loops.

Questions: If you have any issues running it, most likely I can figure it out for you.

Anything else we should know: You can easily change the trigger type to be scheduled, manual, or when a certain event occurs.

The tab delimited file file must be text based as in saved as tab delimited text format.

If your system or excel allows you to export or save as text tab delimited, this should be the correct file type.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi - This flow only works if my text file has one row. I think I have an problem with the carriage return. The error message I get is below. It is adding a \r into the JSON.

The 'content' property of actions of type 'ParseJson' must be valid JSON. The provided value '{

"rows":{

"items":[{"FY Wk":"2020W51","QTR":"2020Q4","QTR Age":"0","Group ID":"1RED","Part Num":"1174500","IPN":"ROSE","Location":"CHI","Capacity":"2000","SWAP Commit":"100","ABC":"100","BRD":"100\r"}{"FY Wk":"2020W51","QTR":"2020Q4","QTR Age":"0","Group ID":"1BLUE","Part Num":"1654500","IPN":"TULIP","Location":"DEN","Capacity":"2000","SWAP Commit":"100","ABC":"100","BRD":"100\r"}{"FY Wk":"2020W51","QTR":"2020Q4","QTR Age":"0","Group ID":"1GREEN","Part Num":"1234500","IPN":"ROSE","Location":"TOL","Capacity":"2000","SWAP Commit":"100","ABC":"100","BRD":"100"}

]

}}' cannot be parsed: 'After parsing a value an unexpected character was encountered: {. Path 'rows.items[0]', line 3, position 192.'.

Any ideas on how to fix?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello @Anonymous

I don't believe the "\r" is causing the issue.

I have run a test and the "\r" is being processed correctly as a return.

I'm thinking there really is a character causing the issue. Anyhow, you would need to find line 3 and count up to character 192.

Don't do that though. I'm uploading a new version today that I've been working on.

It will remove the \r before it is written and will also handle processing more than the limit rows of 5000.

My run with "\r"

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous

I looked at your data closer and the items do not have a comma separating them.

Something is not correct in the original file?

After the tab file is processed and placed in json array they are separated by commas then.

It is possible though that version of the automation did not work correctly because my first files did not have "\r" in them so I could not have the problem. This version though handles the "\r" which could be the issue.

I've uploaded a new version of the automation.

It has changes mainly to handle larger data sets.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks for the response... been busy so haven't had a chance to try the new version. In my raw data I have a CR/LF according to Notepad++. Not sure where the comma comes from?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This solution MIGHT be exactly what I need in order to finally finish a project that's taken me about 2 weeks... BUT when I try to import the solution to Power Automate, I get an error:

The solution file is invalid. The compressed file must contain the following files at its root: solution.xml, customizations.xml, and [Content_Types].xml. Customization files exported from previous versions of Microsoft Dynamics 365 are not supported.

Is the solution zip file from a previous version? I've done some google searches and am still having issues.

Also, thank you so much for posting solutions. I've come across other posts of yours that have been helpful.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Success.

Thanks for coming back.

Apologies but I followed the instructions here which were not appropriate.

https://docs.microsoft.com/en-us/power-automate/import-flow-solution

My mistake

pete